Take Control: Customer-Managed Keys for Lakebase Postgres



Encryption across storage and compute with revocation control

Published: April 20, 2026

Product, 4 min read

by Ben Hagan


#### Summary

- Lakebase CMK enables customer control over encryption keys, allowing you to manage keys in your own cloud KMS rather than relying on Databricks-managed defaults.

- Secure the entire data lifecycle by encrypting both long-term storage and ephemeral compute caches.

- Use your KMS as a technical "kill switch" to render data cryptographically inaccessible and terminate active compute instances, providing a failsafe for high-compliance Postgres workloads.

Encryption at rest is a cloud baseline, but enterprises operating in highly regulated environments must also control the root of trust. Lakebase Customer-Managed Keys (CMK) delivers this control by letting you use your own encryption keys from your cloud Key Management Service (KMS), such as AWS KMS, Azure Key Vault, or Google Cloud KMS, to protect and manage data across the entire Lakebase lifecycle.

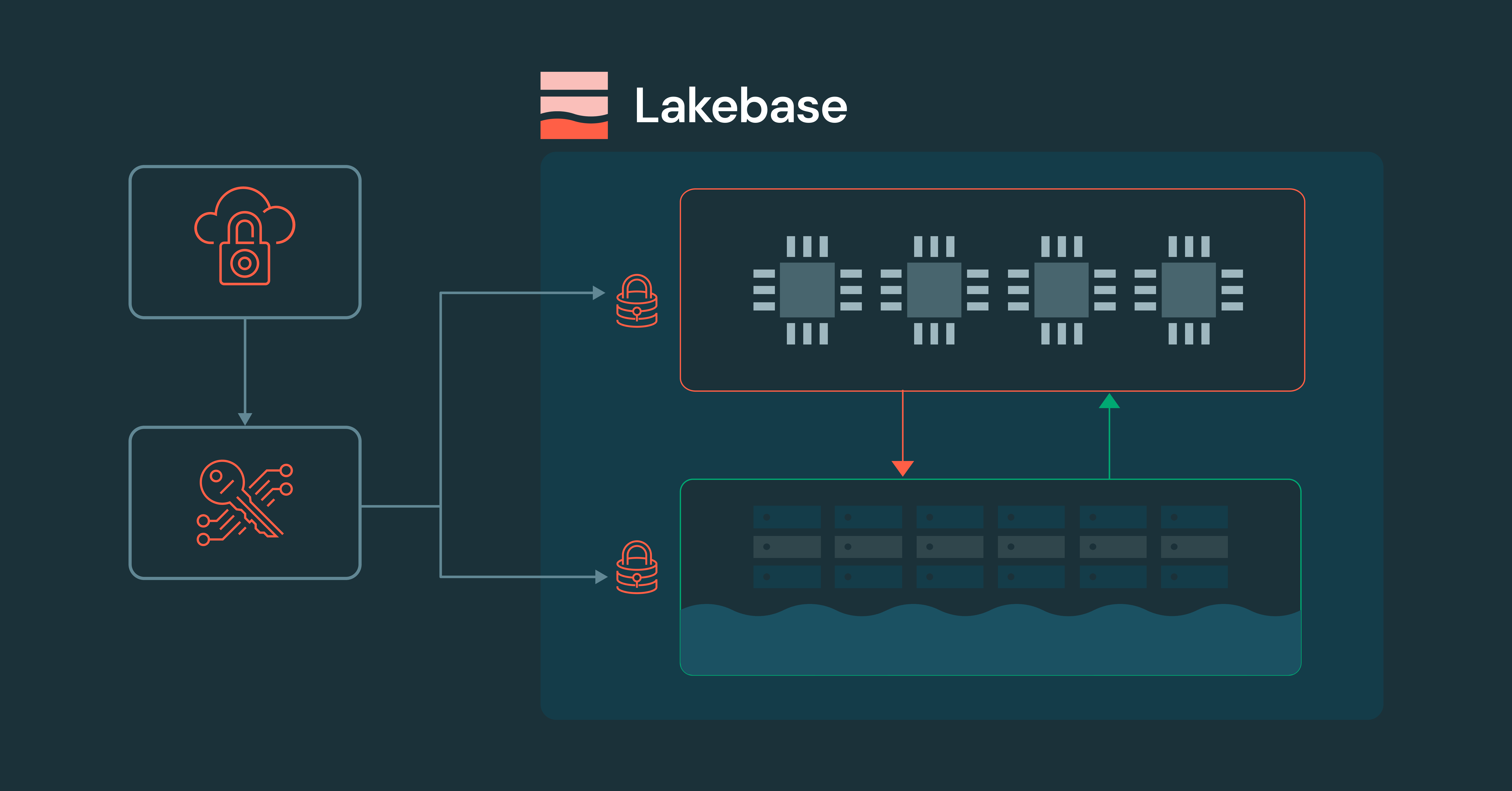

Lakebase Customer-Managed Keys (CMK) offers comprehensive management and control across the entire architecture, unlike conventional managed databases. While traditional databases typically only encrypt storage, Lakebase CMK manages both persistent storage and ephemeral compute.

The Architecture of Lakebase Encryption

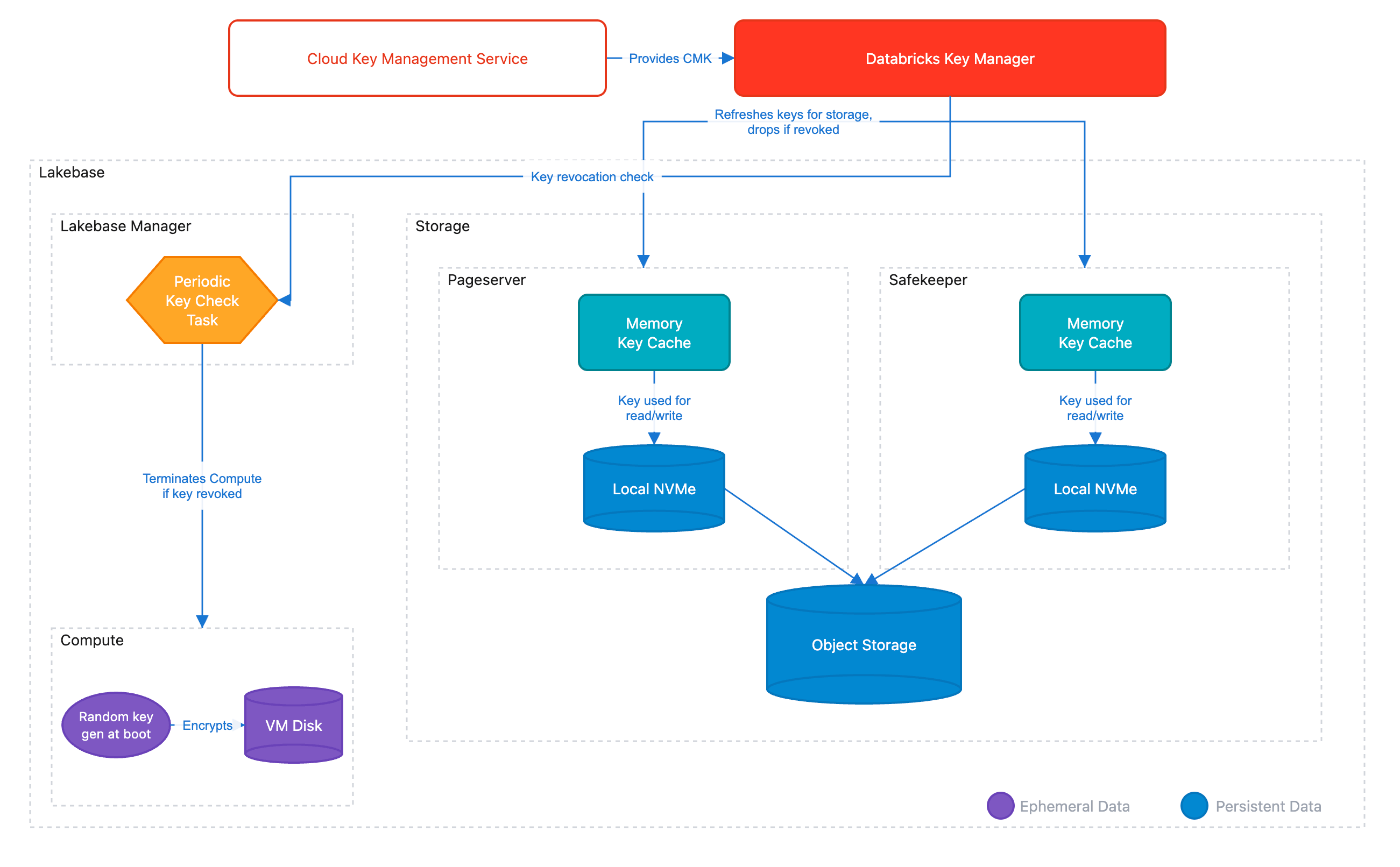

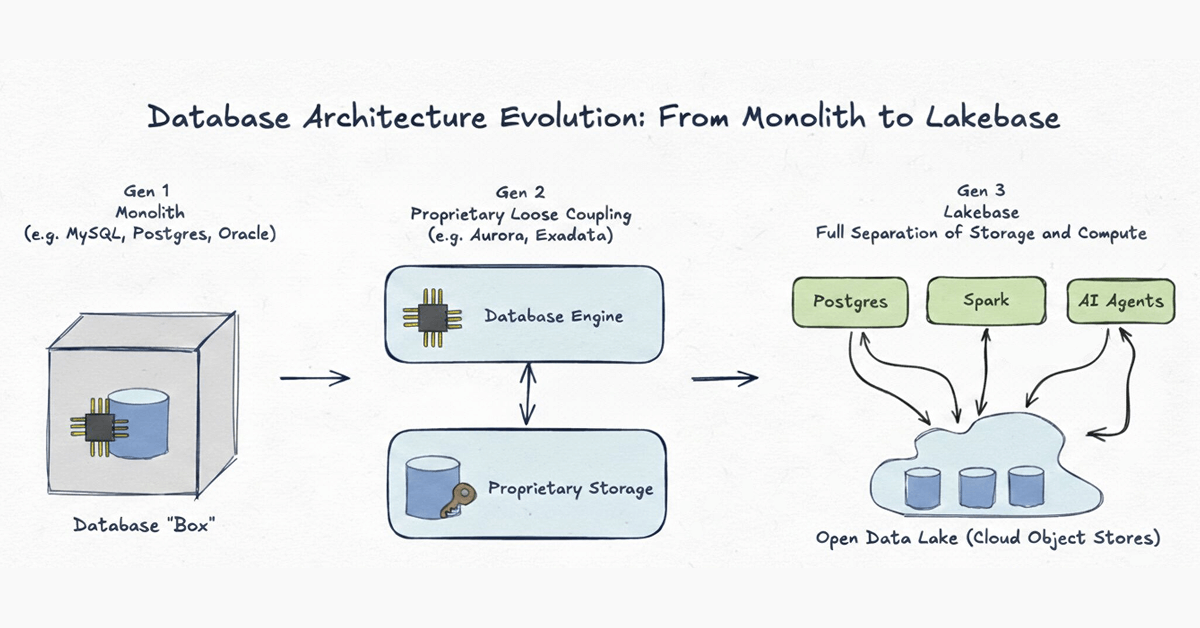

The Lakebase architecture separates storage and compute into independent layers, a design that enables elastic scaling and serverless operations. The storage layer (Pageserver and Safekeeper) maintains long-lived, persistent data in object storage and local caches, while the compute layer runs independent Postgres instances that scale up, down, or to zero based on demand.

This separation creates a unique challenge for encryption: both layers (as well as all of their caches across the architecture) must be encrypted and remain under customer control. Lakebase CMK addresses this through a hierarchical Envelope Encryption model.

The Key Hierarchy

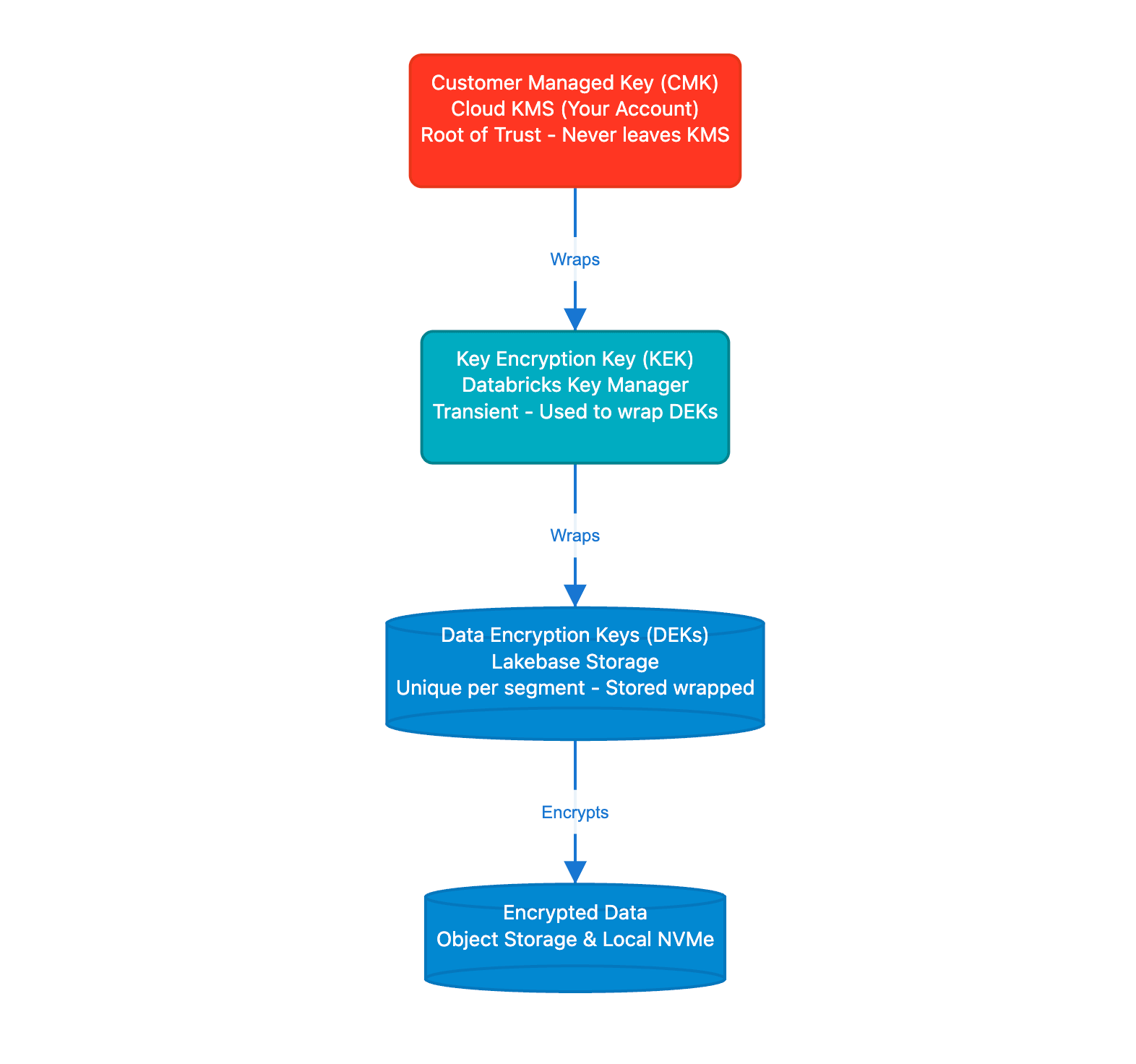

Envelope Encryption is a security model where data is encrypted with unique data keys (DEKs), and those keys are themselves encrypted by higher-level keys. This hierarchy ensures that your CMK never leaves your cloud KMS - Databricks only receives wrapped (encrypted) versions of the keys needed to decrypt data. The model also enables high-performance encryption at scale, since the KMS is only contacted to unwrap keys, not to encrypt every data block. This architecture is what enables seamless key rotation and timely revocation if ever needed.

The hierarchy consists of three levels:

1. **Customer Managed Key (CMK)**: The Root of Trust residing in your cloud KMS (AWS KMS, Azure Key Vault, or Google Cloud KMS). Databricks never sees the plaintext of this key.

2. **Key Encryption Key (KEK)**: A transient key used by the Databricks Key Manager Service to wrap data keys.

3. **Data Encryption Keys (DEKs)**: Unique keys generated for every data segment. These are stored alongside the data in an encrypted (wrapped) state.

When data needs to be accessed, Lakebase components unwrap the necessary DEK using keys obtained from your KMS. In the event of a revocation, the unwrapping will then fail, rendering the data cryptographically inaccessible. As part of this process, all ephemeral compute instances are terminated to remove access to cached data.
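The three-level hierarchy and the effect of revocation can be sketched in a few lines of Python. This is a toy model under stated assumptions, not the Lakebase implementation: `ToyKms`, `seal`, `open_sealed`, `encrypt_segment`, and `decrypt_segment` are hypothetical names, the KEK is assumed to be wrapped directly by the CMK, and the cipher is a standard-library stand-in (SHA-256 keystream plus HMAC) used only so the example runs without extra packages. A real system would use an authenticated cipher such as AES-GCM and a real cloud KMS.

```python
import hashlib
import hmac
import secrets


def _subkey(key: bytes, label: bytes) -> bytes:
    """Derive separate encryption and MAC keys from one key (toy derivation)."""
    return hashlib.sha256(label + key).digest()


def _keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    """Toy keystream: SHA-256 in counter mode. Illustration only."""
    out = b""
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + nonce + counter.to_bytes(8, "big")).digest()
        counter += 1
    return out[:length]


def seal(key: bytes, plaintext: bytes) -> bytes:
    """Encrypt-then-MAC; returns nonce || ciphertext || tag."""
    nonce = secrets.token_bytes(16)
    stream = _keystream(_subkey(key, b"enc"), nonce, len(plaintext))
    ct = bytes(a ^ b for a, b in zip(plaintext, stream))
    tag = hmac.new(_subkey(key, b"mac"), nonce + ct, hashlib.sha256).digest()
    return nonce + ct + tag


def open_sealed(key: bytes, blob: bytes) -> bytes:
    """Verify the tag, then decrypt. Raises ValueError on a wrong key."""
    nonce, ct, tag = blob[:16], blob[16:-32], blob[-32:]
    expected = hmac.new(_subkey(key, b"mac"), nonce + ct, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):
        raise ValueError("wrong key or corrupted data")
    stream = _keystream(_subkey(key, b"enc"), nonce, len(ct))
    return bytes(a ^ b for a, b in zip(ct, stream))


class ToyKms:
    """Hypothetical stand-in for a cloud KMS: the CMK never leaves this object."""

    def __init__(self) -> None:
        self._cmk = secrets.token_bytes(32)
        self.enabled = True  # flip to False to simulate revocation

    def _check(self) -> None:
        if not self.enabled:
            raise PermissionError("CMK disabled or access revoked")

    def wrap(self, key: bytes) -> bytes:
        self._check()
        return seal(self._cmk, key)

    def unwrap(self, wrapped: bytes) -> bytes:
        self._check()
        return open_sealed(self._cmk, wrapped)


def encrypt_segment(kms: ToyKms, wrapped_kek: bytes, segment: bytes):
    """Generate a fresh DEK per segment; return (wrapped DEK, ciphertext)."""
    kek = kms.unwrap(wrapped_kek)          # KMS contacted only to unwrap the KEK
    dek = secrets.token_bytes(32)
    return seal(kek, dek), seal(dek, segment)


def decrypt_segment(kms: ToyKms, wrapped_kek: bytes, wrapped_dek: bytes, ct: bytes) -> bytes:
    kek = kms.unwrap(wrapped_kek)          # fails once the CMK is revoked
    dek = open_sealed(kek, wrapped_dek)
    return open_sealed(dek, ct)
```

In this sketch only wrapped keys are ever stored next to the data, so setting `kms.enabled = False` makes every later `decrypt_segment` call fail even though the ciphertext and wrapped keys are untouched.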

CMK in Practice: Storage and Compute

The practical implementation differs between storage and compute:

1. Persistence Layer (Storage)

All data segments managed by Lakebase, including WAL segments (transaction logs stored by Safekeeper) and data files, are encrypted with keys protected by your CMK. This provides defense-in-depth: data at rest is protected by encryption keys under your control rather than under Databricks' control.

2. Ephemeral Layer (Compute)

The Postgres compute VM holds ephemeral data used by the operating system and PostgreSQL, for example performance caches, WAL artifacts, and temp files, so it is critical that all of this data is also managed under a CMK. CMK protects this ephemeral compute data with:

- **Per Boot Keys**: Every time a Lakebase compute instance starts, it generates a unique ephemeral key.

- **Automatic Shredding**: On CMK revocation, Lakebase Manager terminates the instance, destroying ephemeral in-memory keys and rendering local disk data inaccessible.
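The two mechanisms above can be pictured with a small model. This is only a sketch under assumptions: `ComputeInstance` and `on_cmk_revoked` are hypothetical names, and dropping a Python reference does not securely erase memory the way a real implementation would need to.

```python
import secrets
from typing import Iterable, Optional


class ComputeInstance:
    """Hypothetical compute instance holding a per-boot key in memory only."""

    def __init__(self) -> None:
        # A fresh random key on every start; it is never persisted.
        self._boot_key: Optional[bytes] = secrets.token_bytes(32)
        self.running = True

    def boot_key(self) -> bytes:
        if self._boot_key is None:
            raise RuntimeError("instance terminated; boot key destroyed")
        return self._boot_key

    def terminate(self) -> None:
        # Discarding the in-memory key leaves local disk data undecryptable.
        self._boot_key = None
        self.running = False


def on_cmk_revoked(instances: Iterable[ComputeInstance]) -> None:
    """Assumed revocation handler: terminate every active instance."""
    for instance in instances:
        instance.terminate()
```

Because each boot generates a new key, data written to local disk by one boot cannot be read by the next, and terminating the instance removes the only copy of the key.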

Implementing CMK in the Lakebase Workflow

Implementation follows the standard Databricks Account to Workspace delegation model. This separation of duties ensures that Security Admins can manage keys without needing access to the data itself. Once a key is configured at the workspace level, all Lakebase projects use the CMK as part of the encryption workflow.

Step 1: Key Configuration

An Account Admin creates a Key Configuration in the Databricks Account Console. This object contains the key identifier (ARN for AWS KMS, Key Vault URL for Azure, or Key ID for Google Cloud KMS) and the IAM role or service principal that Lakebase will assume to perform Wrap and Unwrap operations.
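As an illustration of what such an object carries, the following sketch models a key configuration and checks that the key identifier has the general shape each provider uses. The `KeyConfiguration` class and its field names are hypothetical and are not the Databricks API; the patterns are simplified (for example, they do not cover every sovereign-cloud or HSM endpoint variant).

```python
import re
from dataclasses import dataclass

# Simplified shapes of key identifiers per cloud (assumption: common public-cloud forms only).
_KEY_ID_PATTERNS = {
    "aws": re.compile(r"^arn:aws[\w-]*:kms:[a-z0-9-]+:\d{12}:key/[0-9a-zA-Z-]+$"),
    "azure": re.compile(r"^https://[a-zA-Z0-9-]+\.vault\.azure\.net/keys/[a-zA-Z0-9-]+(/[0-9a-f]+)?$"),
    "gcp": re.compile(r"^projects/[^/]+/locations/[^/]+/keyRings/[^/]+/cryptoKeys/[^/]+$"),
}


@dataclass(frozen=True)
class KeyConfiguration:
    """Hypothetical model of a key configuration: key identifier plus the principal used for wrap/unwrap."""

    cloud: str           # "aws", "azure", or "gcp"
    key_identifier: str  # ARN, Key Vault URL, or Cloud KMS key resource name
    principal: str       # IAM role or service principal assumed for Wrap/Unwrap

    def __post_init__(self) -> None:
        pattern = _KEY_ID_PATTERNS.get(self.cloud)
        if pattern is None:
            raise ValueError(f"unsupported cloud: {self.cloud!r}")
        if not pattern.match(self.key_identifier):
            raise ValueError(f"key identifier does not look like a {self.cloud} key")
        if not self.principal:
            raise ValueError("a principal for wrap/unwrap operations is required")
```

For example, `KeyConfiguration("aws", "arn:aws:kms:us-west-2:111122223333:key/1234abcd-12ab-34cd-56ef-1234567890ab", "arn:aws:iam::111122223333:role/example-role")` passes validation, where the account ID, key ID, and role name are placeholder values.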

Step 2: Workspace Binding

The configuration is then mapped to a specific Workspace. For Lakebase, this means:

- **New Projects:** All new Lakebase projects automatically inherit the workspace's CMK.

- **Isolation:** Different workspaces can use different CMKs to satisfy multi-tenant or multi-departmental security requirements.
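The inheritance and isolation rules can be expressed as a small data model. The `Workspace` and `Project` classes and the configuration IDs below are hypothetical and exist only to illustrate the binding; a workspace with no bound key is assumed to fall back to Databricks-managed defaults.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Project:
    name: str
    workspace: str
    key_config_id: Optional[str]  # None means Databricks-managed default (assumption)


@dataclass
class Workspace:
    name: str
    key_config_id: Optional[str] = None
    projects: List[Project] = field(default_factory=list)

    def bind_key(self, key_config_id: str) -> None:
        """Map a key configuration to this workspace."""
        self.key_config_id = key_config_id

    def create_project(self, name: str) -> Project:
        """New projects inherit whatever key the workspace is bound to."""
        project = Project(name, self.name, self.key_config_id)
        self.projects.append(project)
        return project


# Two workspaces bound to different (made-up) key configurations stay isolated.
finance = Workspace("finance")
finance.bind_key("keycfg-finance")
research = Workspace("research")
research.bind_key("keycfg-research")

ledger = finance.create_project("ledger-db")
notebook = research.create_project("experiments-db")
```

Here `ledger.key_config_id` is `"keycfg-finance"` and `notebook.key_config_id` is `"keycfg-research"`, so revoking one department's key does not affect the other's projects.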

Step 3: Lifecycle Management and Rotation

Lakebase supports Seamless Key Rotation. When you rotate your CMK in your cloud provider's console:

- The envelope encryption hierarchy enables seamless rotation - your CMK can be rotated in your cloud KMS without re-encrypting data or changing DEKs.

- No downtime or manual re-encryption is required.
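Why rotation needs no re-encryption can be seen in a toy model. The sketch assumes the KMS keeps earlier key versions after rotation and records which version wrapped each blob, which is how cloud KMS rotation commonly behaves; `VersionedToyKms` is a hypothetical class, and its XOR-based wrapping is unauthenticated and for illustration only.

```python
import hashlib
import secrets


class VersionedToyKms:
    """Hypothetical KMS that retains old key versions after rotation."""

    def __init__(self) -> None:
        self._versions = [secrets.token_bytes(32)]

    def rotate(self) -> None:
        # New material becomes current; earlier versions stay available for unwrap.
        self._versions.append(secrets.token_bytes(32))

    def wrap(self, key: bytes) -> bytes:
        if len(key) > 32:
            raise ValueError("toy wrap supports keys up to 32 bytes")
        version = len(self._versions) - 1
        nonce = secrets.token_bytes(16)
        pad = hashlib.sha256(self._versions[version] + nonce).digest()
        body = bytes(a ^ b for a, b in zip(key, pad))
        # Layout: 4-byte version || 16-byte nonce || wrapped key
        return version.to_bytes(4, "big") + nonce + body

    def unwrap(self, blob: bytes) -> bytes:
        version = int.from_bytes(blob[:4], "big")
        nonce, body = blob[4:20], blob[20:]
        pad = hashlib.sha256(self._versions[version] + nonce).digest()
        return bytes(a ^ b for a, b in zip(body, pad))


kms = VersionedToyKms()
kek = secrets.token_bytes(32)
wrapped_before = kms.wrap(kek)       # wrapped under version 0

kms.rotate()                         # customer rotates the CMK

recovered = kms.unwrap(wrapped_before)   # old blob still unwraps
wrapped_after = kms.wrap(kek)            # new wraps use version 1
```

The DEKs and the data they protect are never touched: only the top of the hierarchy changes, and blobs wrapped before the rotation remain readable.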

Security Auditability

Because the CMK resides in your cloud account, cryptographic operations against your key are logged in your provider's audit service (AWS CloudTrail, Azure Monitor, or Google Cloud Audit Logs).
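As one way to use those logs, the sketch below filters AWS CloudTrail records for operations against a specific key. The field names (`eventSource`, `eventName`, `eventTime`, `userIdentity`, `resources`, `errorCode`) follow the CloudTrail record format; the `kms_activity` helper and the sample records are made up for illustration and do not represent real Lakebase activity.

```python
from typing import Any, Dict, List

KEY_ARN = "arn:aws:kms:us-west-2:111122223333:key/1234abcd-12ab-34cd-56ef-1234567890ab"

# Made-up sample records shaped like CloudTrail events (placeholder values throughout).
SAMPLE_RECORDS: List[Dict[str, Any]] = [
    {
        "eventTime": "2026-04-20T10:00:00Z",
        "eventSource": "kms.amazonaws.com",
        "eventName": "Decrypt",
        "userIdentity": {"arn": "arn:aws:sts::111122223333:assumed-role/example-role/session"},
        "resources": [{"ARN": KEY_ARN, "type": "AWS::KMS::Key"}],
    },
    {
        "eventTime": "2026-04-20T10:05:00Z",
        "eventSource": "kms.amazonaws.com",
        "eventName": "Decrypt",
        "errorCode": "AccessDenied",
        "userIdentity": {"arn": "arn:aws:sts::111122223333:assumed-role/example-role/session"},
        "resources": [{"ARN": KEY_ARN, "type": "AWS::KMS::Key"}],
    },
    {
        "eventTime": "2026-04-20T10:06:00Z",
        "eventSource": "s3.amazonaws.com",
        "eventName": "GetObject",
        "userIdentity": {"arn": "arn:aws:iam::111122223333:user/someone"},
        "resources": [],
    },
]


def kms_activity(records: List[Dict[str, Any]], key_arn: str) -> List[Dict[str, Any]]:
    """Return one summary row per KMS event that touched the given key."""
    rows = []
    for rec in records:
        if rec.get("eventSource") != "kms.amazonaws.com":
            continue
        arns = {res.get("ARN") for res in rec.get("resources", [])}
        if key_arn not in arns:
            continue
        rows.append({
            "time": rec.get("eventTime"),
            "operation": rec.get("eventName"),
            "caller": rec.get("userIdentity", {}).get("arn"),
            "error": rec.get("errorCode"),  # None when the call succeeded
        })
    return rows


activity = kms_activity(SAMPLE_RECORDS, KEY_ARN)
```

A row with a non-empty `error` after a revocation is the kind of evidence an auditor would look for: the unwrap was attempted and the KMS refused it.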

Get Started with Enhanced Data Sovereignty

If your organization requires the highest level of cryptographic control over your Postgres workloads, Lakebase CMK is now available for Enterprise tier customers.

Ready to secure your data? Contact your Databricks account team to enable Customer Managed Keys for your workspace, or visit our technical documentation to review the prerequisite IAM policies and KMS configurations.

Not yet a Databricks customer? Get started with a trial.
