Backstage with Lakebase

Table of contents

- The Setup: Pointing Backstage at Lakebase

- Branching Changes the Database Development Cycle

- Point-in-Time Recovery: The Undo Button

- From Infrastructure Capability to Developer Workflow

- What Branching Enables

Partners | April 30, 2026

Branching the Dev Cycle (Part 1)

by Cameron Casher and Kevin Hartman

For thirty years, the operational database and the analytical database have been two artifacts, two governance planes, two budgets, and usually two on-call rotations, connected by an ETL job someone wrote in a hurry and nobody wants to own. That split was never a design choice; it was a physics constraint. OLTP and OLAP had genuinely different storage layouts, different compute profiles, and different failure modes, so we built two platforms and wired them together after the fact.

That constraint is dissolving. When storage is shared, compute is serverless and isolated per workload, and governance lives at the catalog layer, "operational" and "analytical" stop being architectural categories and start being access patterns against the same foundation.

To test whether that's actually true in practice, we took Backstage, Spotify's notoriously state-heavy Internal Developer Portal, ripped it off its standard Postgres database, and pointed it at Databricks Lakebase. Across this three-part series, we'll explore what happens to Deployment Cycles (Part 1), Governance (Part 2), and FinOps (Part 3) when you collapse the wall between the operational app and the data platform.

**The Setup: Pointing Backstage at Lakebase**

Lakebase exposes a serverless Postgres surface (leveraging Neon's architecture under the hood) that lives inside the Databricks Platform. Because it speaks wire-protocol Postgres, Backstage doesn't know or care that it isn't talking to RDS.

Getting it connected required pointing `app-config.yaml` at Lakebase and swapping Backstage's default in-memory search for `PgSearchEngine`. One immediate hurdle: Lakebase rejects classic Databricks Personal Access Tokens, expecting an OAuth JWT instead. The CLI provides `databricks postgres generate-database-credential`, which generates a scoped, short-lived JWT for a specific endpoint; this is the intended approach for apps and CI. For this POC, we wrapped that command in a lightweight cron script that rewrote the `DATABRICKS_TOKEN` in our `.env` file every 50 minutes to stay ahead of token expiration.
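The cron wrapper described above might look roughly like the following sketch. It is not the script we ran: the function names are hypothetical, the caller supplies the full credential command, and the assumption that the command prints JSON with a `token` field should be checked against the real CLI output.

```python
import json
import subprocess
from pathlib import Path

ENV_KEY = "DATABRICKS_TOKEN"


def fetch_token(cli_args: list[str]) -> str:
    """Run the credential command and return the token it prints.

    ASSUMPTION: the command writes JSON with a "token" field to stdout.
    Verify this against the actual CLI output before relying on it.
    """
    result = subprocess.run(cli_args, capture_output=True, text=True, check=True)
    return json.loads(result.stdout)["token"]


def rewrite_env_token(env_text: str, token: str, key: str = ENV_KEY) -> str:
    """Return env_text with `key` set to `token`, appending the key if absent."""
    new_line = f"{key}={token}"
    lines = env_text.splitlines()
    replaced = False
    for i, line in enumerate(lines):
        if line.startswith(f"{key}="):
            lines[i] = new_line
            replaced = True
    if not replaced:
        lines.append(new_line)
    return "\n".join(lines) + "\n"


def refresh_env_file(env_path: Path, cli_args: list[str]) -> None:
    """Fetch a fresh token and swap it into the .env file via a temp file."""
    token = fetch_token(cli_args)
    updated = rewrite_env_token(env_path.read_text(), token)
    tmp = env_path.with_name(env_path.name + ".tmp")
    tmp.write_text(updated)
    tmp.replace(env_path)  # rename so readers never see a half-written file
```

Scheduling `refresh_env_file` every 50 minutes reproduces the POC behavior. A long-running service would more likely refresh the credential in-process than rewrite a file on disk.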

With auth sorted, the Knex migrations ran cleanly, and the portal was live.

**Branching Changes the Database Development Cycle**

The most underappreciated thing about a traditional Postgres deployment isn't its feature set; it's the tempo it forces on the teams that own it.

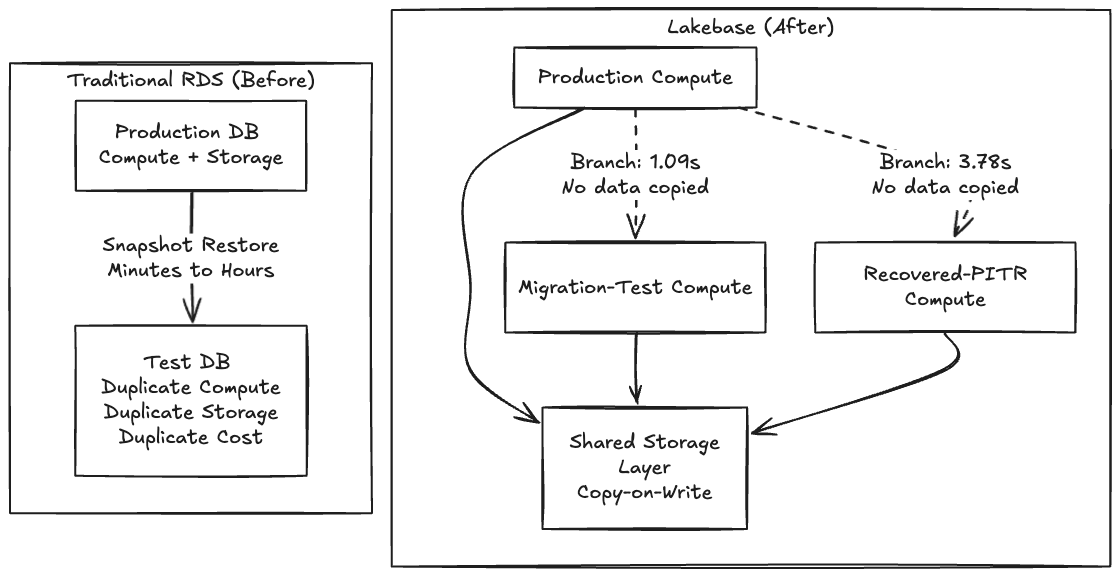

Thoughtworks has been a consistent advocate for Backstage as an IDP foundation through the Technology Radar, so we know the tool well. We also chose Backstage for this POC because its schema migrations are notoriously fragile, which made it a good test case for a Lakebase integration. On traditional RDS, testing a risky migration means waiting minutes or hours for a snapshot to restore into a parallel instance. Because making a copy is slow and expensive, teams simply don't test. They cross their fingers and run the migration in a maintenance window.

When making a copy becomes free, you stop asking "is this change safe enough to run?" and start asking "which fork of production do I want to try it on first?"

Because Lakebase separates storage from compute using a copy-on-write architecture, creating a branch doesn't copy any data. It creates a pointer to the same underlying pages and only diverges on write. That's why the operation is instant.
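To make the copy-on-write idea concrete, here is a toy in-memory model. It is an illustration only and says nothing about how Lakebase stores pages internally: the `Branch` class and its methods are hypothetical, and a real system would avoid even the page-table copy shown here by versioning pages in shared storage.

```python
class Branch:
    """Toy copy-on-write branch: forks share page objects until one side writes."""

    def __init__(self, page_table=None):
        # Copy the table of references only; the page contents are not duplicated.
        self.page_table = dict(page_table or {})

    def fork(self):
        """Create a child branch that points at the same underlying pages."""
        return Branch(self.page_table)

    def write(self, page_id, data: bytes):
        """Point this branch at a new page; other branches keep the old one."""
        self.page_table[page_id] = data

    def read(self, page_id) -> bytes:
        return self.page_table[page_id]


production = Branch()
production.write(1, b"catalog-v1")
production.write(2, b"users-v1")

migration_test = production.fork()
# Right after the fork, both branches reference the very same page object.
shared_before_write = migration_test.read(1) is production.read(1)

# A write on the fork diverges only that page; production is untouched.
migration_test.write(1, b"catalog-v2")
```

The cost of `fork` here scales with the number of page references, not with the amount of data, which is the property that makes branching cheap.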

One gotcha the docs don't make obvious: the request body must nest everything inside a `spec` object, and you must specify `ttl`, `expire_time`, or `no_expiry`. Without one of them, the API returns "Expiration must be specified."

```bash
$ databricks postgres create-branch \
    projects/cam-db-lakebase-test \
    migration-test-20260408 \
    --json '{
      "spec": {
        "source_branch": "projects/cam-db-lakebase-test/branches/production",
        "ttl": "3600s"
      }
    }'
```

The control plane acknowledged it instantly. The actual data-plane clone of the ~63 MB Backstage catalog landed in **1.09 seconds**.
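Since a missing expiration is easy to hit, a small helper can build the `--json` body and fail fast before the API does. This is a sketch under assumptions: `build_branch_request` is a hypothetical helper, it only knows the fields shown in this post, and it checks only that at least one expiration field is present.

```python
import json

# Expiration fields named in this post; the API requires one to be set.
EXPIRY_FIELDS = ("ttl", "expire_time", "no_expiry")


def build_branch_request(source_branch: str, **fields) -> str:
    """Return the JSON body for create-branch, nested under "spec"."""
    if not any(name in fields for name in EXPIRY_FIELDS):
        raise ValueError(
            "Expiration must be specified: pass ttl, expire_time, or no_expiry"
        )
    spec = {"source_branch": source_branch, **fields}
    return json.dumps({"spec": spec}, indent=2)


body = build_branch_request(
    "projects/cam-db-lakebase-test/branches/production",
    ttl="3600s",
)
```

The resulting string can be passed as the `--json` argument of the command above.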

**Point-in-Time Recovery: The Undo Button**

Branching and Point-in-Time Recovery (PITR) are essentially the same primitive: branching is just PITR with `source_branch_time = now`. To test recovery against real deleted data, we wiped our `final_entities` table, dropping the count from 32 to 0.

We then created a recovery branch from a timestamp captured seconds before the delete:

```bash
$ databricks postgres create-branch \
    projects/cam-db-lakebase-test \
    recovered-20260408 \
    --json '{
      "spec": {
        "source_branch": "projects/cam-db-lakebase-test/branches/production",
        "source_branch_time": "2026-04-08T22:56:02Z",
        "ttl": "3600s"
      }
    }'
```

The elapsed time end-to-end was **3.78 seconds**.

Verifying the data confirmed the recovered branch had all 32 entities back. Production was still at zero, confirming that the delete was real and that the branches are fully isolated. Notably, we asked for 22:56:02Z, but Lakebase snapped backward to the nearest WAL record at 22:55:50Z, 12 seconds earlier. This WAL-level granularity is an important caveat for time-sensitive recovery workflows, but the incident cycle still ran in under a minute.
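For recovery runbooks it can help to quantify that snap explicitly. The sketch below uses the two timestamps from our test; the helper names are hypothetical, and how you obtain the effective timestamp for a branch is left open here.

```python
from datetime import datetime, timezone


def parse_utc(ts: str) -> datetime:
    """Parse a timestamp such as 2026-04-08T22:56:02Z as timezone-aware UTC."""
    return datetime.strptime(ts, "%Y-%m-%dT%H:%M:%SZ").replace(tzinfo=timezone.utc)


def snap_gap_seconds(requested: str, effective: str) -> float:
    """Seconds between the requested restore point and the one actually used."""
    return (parse_utc(requested) - parse_utc(effective)).total_seconds()


def restores_before(effective: str, incident: str) -> bool:
    """True if the effective restore point predates the incident."""
    return parse_utc(effective) < parse_utc(incident)


gap = snap_gap_seconds("2026-04-08T22:56:02Z", "2026-04-08T22:55:50Z")
```

Here `gap` is 12 seconds. Because the snap moves backward, a restore point requested before the incident stays before it, but any writes that landed inside the gap are absent from the recovered branch.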

When database state becomes a cheap, forkable artifact instead of a 2 TB EBS volume, every risky operation gets a dry run, and every incident gets an undo.

**From Infrastructure Capability to Developer Workflow**

The results above show that database branching works: a 1-second clone, a 4-second recovery, and a real application that doesn't know the difference. But there's a gap between "the database can branch" and "my team branches the database as naturally as they branch code." Closing that gap is where the developer-productivity gains are actually realized.

We've spent the last several months working with development teams to answer a specific question: what happens to a team's velocity when database branching becomes invisible, when it's not a CLI command you run but something that happens automatically as part of how you already work in your editor of choice? Work is underway on a VS Code/Cursor extension that synchronizes git and database branches automatically to prove this out, but the tooling is secondary to what it enables.

**What Branching Enables**

Across the teams we've worked with, the sprint cycle without database branching looks like this:

1. Create a git branch for feature development
2. Write mock objects for every database interface (MockUserRepository, MockOrderService...) for testing purposes
3. Write unit tests with a mocked or in-memory database (H2, SQLite)
4. Submit a PR, get it reviewed and merge code
5. Deploy to a shared staging environment
6. Discover that the schema migration doesn't work against real data or the size of data is a blocker
7. Fix schema migration, redeploy, repeat

With database branching available, a developer's feature development cycle changes:

1. Create a git branch: a Lakebase database branch can be created automatically in < 1 second
2. Your IDE connects to the real branch database immediately
3. Write code and run migrations against real database data from the first line of code
4. Write integration tests against the real database, not database mocks
5. Experiment with multiple solutions, since rolling back database changes is trivial
6. Push and open a PR: CI creates its own database branch, validates both code and schema, and publishes a schema diff
7. QA team members can get their own database branch for destructive testing, which can be reset in seconds
8. Merge: once merged, the CD pipeline can migrate upstream environments like UAT and production and clean up all branches, both code and data
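Steps 1 and 6 above depend on a predictable mapping from a git branch to a database branch. The sketch below shows one way that mapping could work. The naming rule and the 63-character limit are assumptions made for illustration, not documented Lakebase constraints and not necessarily what the extension does; only the `projects/<project>/branches/<name>` path shape comes from the commands earlier in this post.

```python
import re


def db_branch_name(git_branch: str, max_len: int = 63) -> str:
    """Derive a database branch name from a git branch name.

    ASSUMPTION: lowercase letters, digits, and hyphens, at most max_len
    characters. Adjust to whatever the platform actually accepts.
    """
    slug = re.sub(r"[^a-z0-9]+", "-", git_branch.lower()).strip("-")
    if not slug:
        raise ValueError("git branch name has no usable characters")
    return slug[:max_len].rstrip("-")


def branch_path(project: str, git_branch: str) -> str:
    """Build the resource path in the form used by the CLI calls in this post."""
    return f"projects/{project}/branches/{db_branch_name(git_branch)}"


path = branch_path("cam-db-lakebase-test", "feature/ADD_owner-column")
```

A deterministic mapping like this lets a git hook or CI job create, look up, and delete the matching database branch without storing any extra state.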

The mock objects disappear. The staging collisions disappear. The "works on my machine but breaks in staging" problem disappears, because developers get a live database on which to try multiple solutions. The database changes that used to be discovered at deployment are now caught during development, where they're cheap to fix. Instant branches for performance tests, disposable and isolated branches for functional tests, and a running branch for UAT stakeholders all become trivial.

In our experience across multiple partner teams evaluating this workflow, mock objects account for 20-30% of test code. That's not test coverage; it's test infrastructure, and it diverges from production behavior over time, creating false confidence. When branching a production-equivalent database costs nothing, mocking becomes the expensive choice.

The question now is: how much of your sprint are you spending on workarounds for a constraint that no longer exists?

_In Part 2 of this series, we will look at what happens to security and compliance when this operational database gets absorbed directly into Unity Catalog, Databricks' unified governance layer._
