What's the difference between AI in demo vs AI in production? 𝗚𝘂𝗮𝗿𝗱𝗿𝗮𝗶𝗹𝘀. Demos show what...

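- Demos showcase what AI can do; production verifies that it stays stable when things go wrong.

- Adaptive feedback loops let the AI evaluate and correct its own output in real time.

- Production-grade AI applications need multiple layers of safeguards to ensure reliability.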

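Outline

- §Introduction

Contrasts AI in demos with AI in production to introduce the topic.

Stresses the need to move from experiments to enterprise-grade systems.

Details the four core strategies for production-grade agentic workflows.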

Mind map

- AI in Demo vs Production
  - Demo vs Production
    - Unpredictable Experiment
    - Reliable System
  - Critical Patterns
    - Adaptive Feedback Loops
    - Corrective Action
    - Human in the Loop (HITL)
    - Emergency Stop

Highlights

Demos show what AI can do. Production systems prove they won't break when things go wrong.

- Adaptive Feedback Loops: The agent evaluates its own output for hallucinations or policy violations.

Get your free copy here: [stack-ai.com/whitepaper/wea](https://t.co/e2nOYX4cg3)


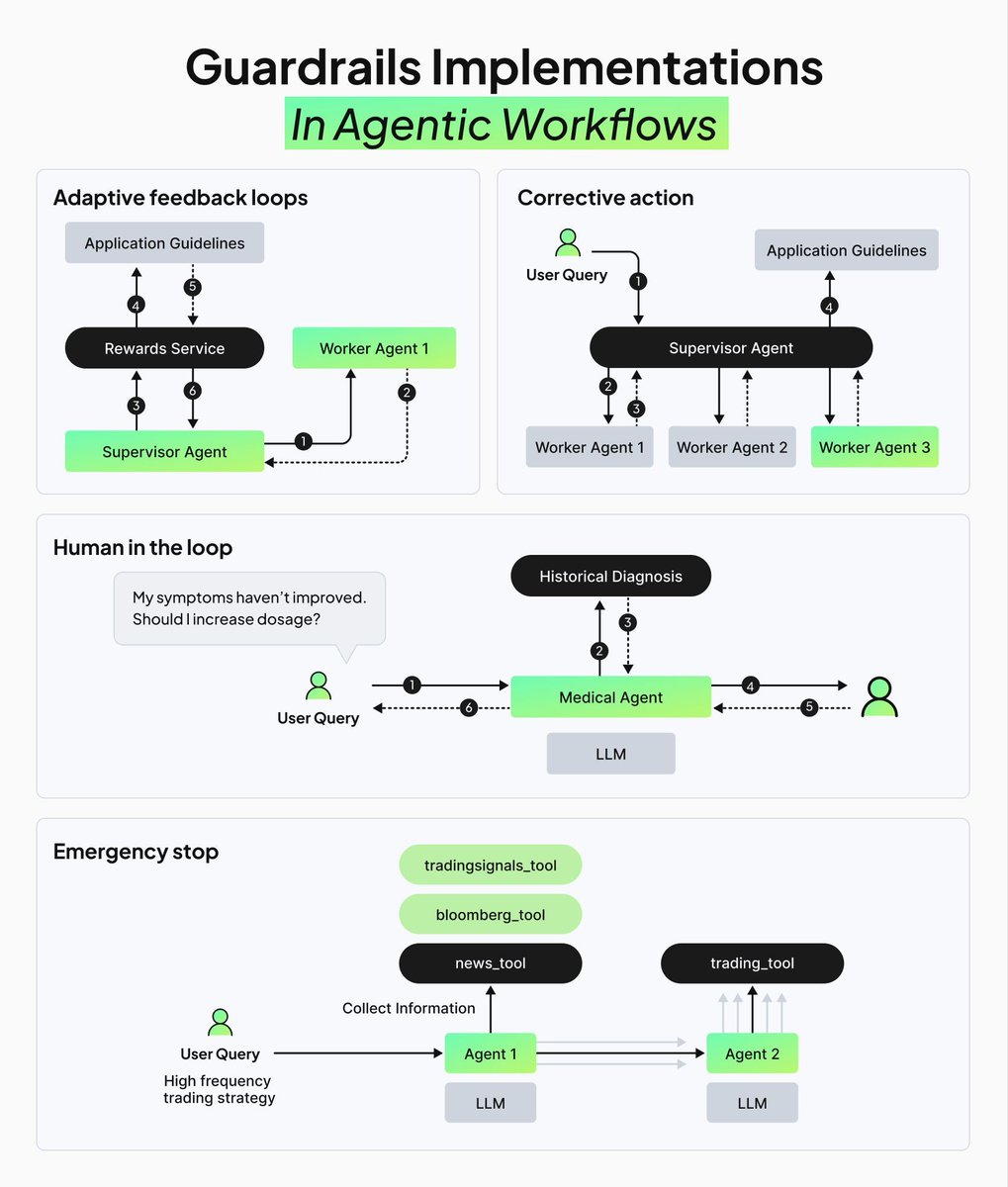

What's the difference between AI in demo vs AI in production? 𝗚𝘂𝗮𝗿𝗱𝗿𝗮𝗶𝗹𝘀.

Demos show what AI 𝘤𝘢𝘯 do. Production systems prove they won't break when things go wrong.

To move from an unpredictable experiment to a reliable enterprise system, you need guardrail implementations. Here are the 4 critical patterns in production-grade agentic workflows:

- 𝗔𝗱𝗮𝗽𝘁𝗶𝘃𝗲 𝗙𝗲𝗲𝗱𝗯𝗮𝗰𝗸 𝗟𝗼𝗼𝗽𝘀: The agent evaluates its own output for hallucinations or policy violations. If it fails, it scores the response, generates feedback, and self-corrects in real-time.

- 𝗖𝗼𝗿𝗿𝗲𝗰𝘁𝗶𝘃𝗲 𝗔𝗰𝘁𝗶𝗼𝗻: When an evaluation fails, the system doesn't stop. It triggers automated retries with safer prompts or tighter retrieval filters to ensure the second attempt hits the mark.

- 𝗛𝘂𝗺𝗮𝗻 𝗶𝗻 𝘁𝗵𝗲 𝗟𝗼𝗼𝗽 (𝗛𝗜𝗧𝗟): For high-stakes decisions, the agent pauses and routes the action to a human reviewer. Once approved, the workflow resumes. This balances AI speed with human accountability.

- 𝗘𝗺𝗲𝗿𝗴𝗲𝗻𝗰𝘆 𝗦𝘁𝗼𝗽: If risk thresholds are crossed or the system detects a severe anomaly, it kills the process rather than guessing.

These guardrails give you AI agents that can handle compliance checks, RFP drafting, and claims triage without creating new liabilities.

We just released a complete guide with

covering these patterns and the full architecture for reliable RAG agents. Get your free copy here: stack-ai.com/whitepaper/wea
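
To make the four patterns concrete, here is a minimal Python sketch of how they might fit together in one control loop. This is not taken from the guide: every name in it (`run_with_guardrails`, `Evaluation`, `EmergencyStop`, the `generate`, `evaluate`, and `approve` callables, the 0-to-1 risk scale, and the default thresholds) is a hypothetical stand-in chosen for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Optional


class EmergencyStop(Exception):
    """Raised when risk crosses the hard threshold; the run is aborted."""


@dataclass
class Evaluation:
    passed: bool   # did the output clear the hallucination / policy checks?
    risk: float    # assumed scale: 0.0 (safe) to 1.0 (severe anomaly)
    feedback: str  # critique that is fed into the next attempt


def run_with_guardrails(
    task: str,
    generate: Callable[[str], str],         # hypothetical model call
    evaluate: Callable[[str], Evaluation],  # hypothetical self-evaluation step
    approve: Callable[[str], bool],         # hypothetical human reviewer
    high_stakes: bool = False,
    max_attempts: int = 3,
    stop_threshold: float = 0.9,
) -> Optional[str]:
    """Return an accepted output, or None if it was rejected or never passed."""
    prompt = task
    for _ in range(max_attempts):
        output = generate(prompt)

        # Adaptive feedback loop: score the agent's own output.
        result = evaluate(output)

        # Emergency stop: kill the run instead of guessing.
        if result.risk >= stop_threshold:
            raise EmergencyStop(f"risk {result.risk:.2f} >= {stop_threshold:.2f}")

        if result.passed:
            # Human in the loop: pause high-stakes actions for sign-off.
            if high_stakes and not approve(output):
                return None
            return output

        # Corrective action: retry with a safer prompt built from the feedback.
        prompt = (
            f"{task}\n\n"
            f"The previous attempt was rejected: {result.feedback}\n"
            "Answer conservatively and rely only on the retrieved sources."
        )
    return None  # retries exhausted without a passing output
```

In this sketch the emergency stop is checked before anything else, so a severe anomaly can never be retried or sent to a reviewer. A real system would likely also tighten retrieval filters on retry and log every evaluation, both of which are omitted here for brevity.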
