Google DeepMind (@GoogleDeepMind)

This progress allows us to rethink global compute: 🔘 We successfully trained a 12B @GoogleGemma mode...


AI In-Depth Summary

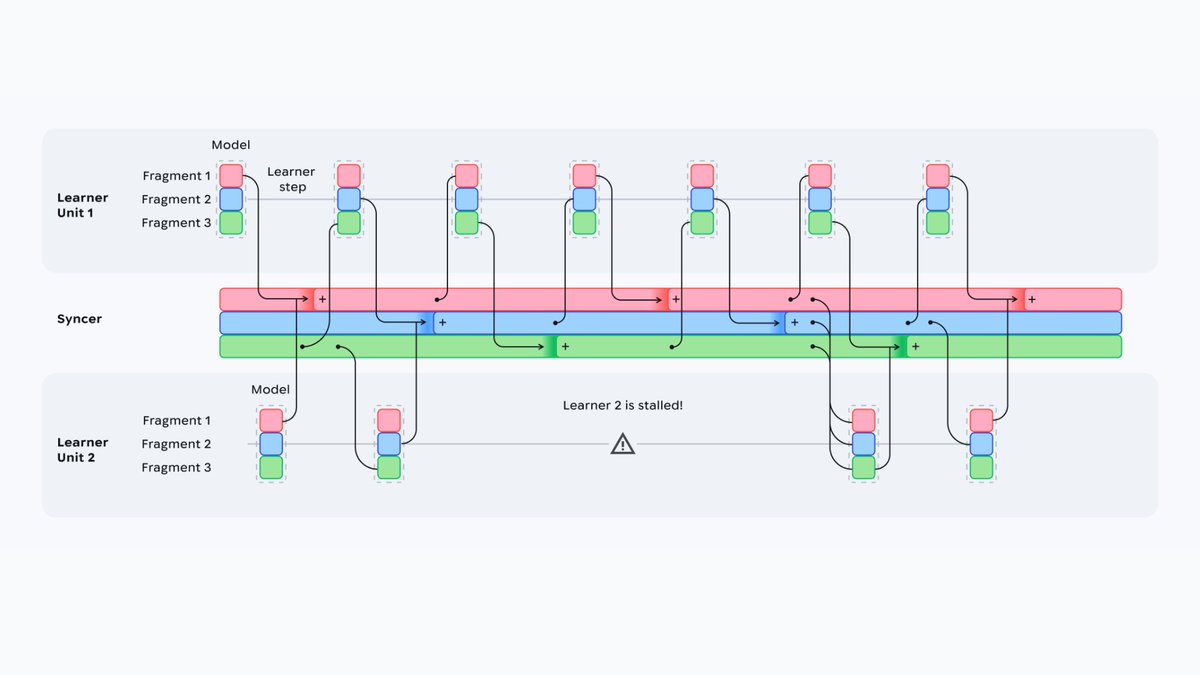

🔘 We successfully trained a 12B @GoogleGemma model across four US regions using low-bandwidth networks 🔘 We showed we can mix different hardware generations, such as TPU6e and TPUv5p, without slowing down performance during https://t.co/g6dwehJguM


This progress allows us to rethink global compute:

🔘 We successfully trained a 12B @GoogleGemma model across four US regions using low-bandwidth networks

🔘 We showed we can mix different hardware generations, such as TPU6e and TPUv5p, without slowing down performance during training