Excited to share our work on production-ready W4A8 inference, now integrated in vLLM! By combining 4...

- Combines 4-bit weights with 8-bit activations to balance memory footprint against compute throughput.

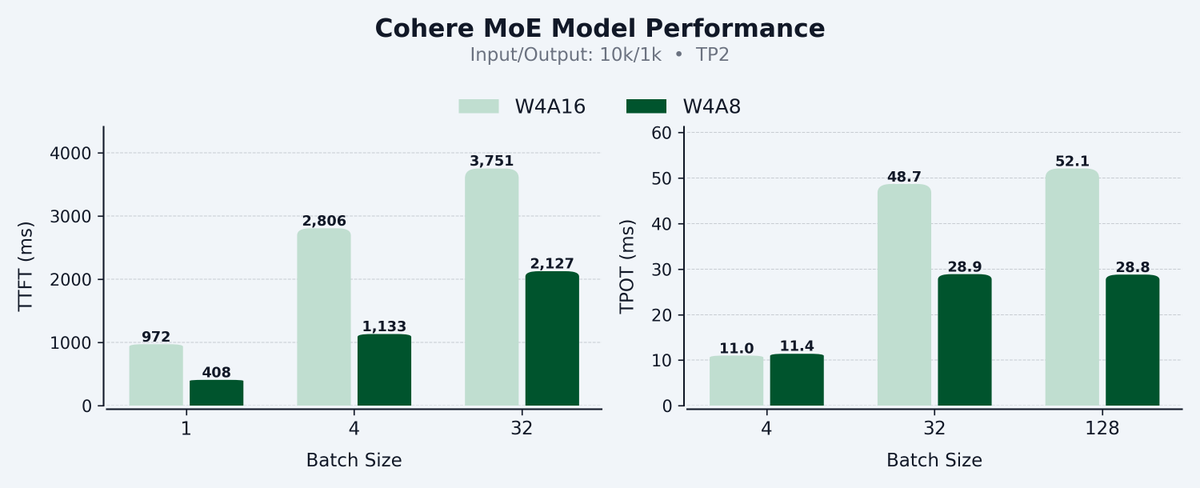

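- Compared with W4A16 on Hopper GPUs, TTFT (time to first token) is up to 58% faster and TPOT (time per output token) up to 45% faster.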

- The optimization is integrated into the open-source vLLM project.
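The W4A8 idea in these bullets can be illustrated with a small sketch. This is not the vLLM kernel: it assumes symmetric int4 weights with one scale per output channel and symmetric int8 activations with one scale per token. The post does not say which 8-bit format, grouping, or scale layout the production kernels use (on Hopper the activations may well be FP8 rather than int8). The helper names `quantize_symmetric`, `w4a8_linear`, and `reference_linear` are hypothetical.

```python
# Hypothetical W4A8 linear-layer sketch in plain Python (not the vLLM kernel).
# Assumptions: symmetric int4 weights with one scale per output channel,
# symmetric int8 activations with one scale per token, integer accumulation,
# and a single floating-point rescale per output element.

def quantize_symmetric(values, bits):
    """Map floats to signed `bits`-wide integers sharing one scale."""
    qmax = 2 ** (bits - 1) - 1              # 7 for int4, 127 for int8
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # round() to the nearest integer, then clamp to [-qmax - 1, qmax]
    q = [max(-qmax - 1, min(qmax, round(v / scale))) for v in values]
    return q, scale


def w4a8_linear(x_rows, w_rows):
    """Approximate y = x @ W^T using int4 weights and int8 activations.

    x_rows: one activation vector (length K) per token.
    w_rows: one weight row (length K) per output channel.
    """
    # Offline step: quantize every weight row to int4 once.
    w_quant = [quantize_symmetric(row, bits=4) for row in w_rows]
    out = []
    for x in x_rows:
        # Online step: quantize this token's activations to int8.
        x_q, x_scale = quantize_symmetric(x, bits=8)
        row_out = []
        for w_q, w_scale in w_quant:
            acc = sum(a * b for a, b in zip(x_q, w_q))   # pure integer dot product
            row_out.append(acc * x_scale * w_scale)      # dequantize once at the end
        out.append(row_out)
    return out


def reference_linear(x_rows, w_rows):
    """Full-precision y = x @ W^T for comparison."""
    return [[sum(a * b for a, b in zip(x, w)) for w in w_rows] for x in x_rows]


if __name__ == "__main__":
    x = [[0.3, -1.1, 2.0, 0.7]]
    w = [[1.2, -0.4, 0.3, 2.1], [-0.6, 0.9, 0.15, -0.4]]
    print("W4A8: ", w4a8_linear(x, w))
    print("float:", reference_linear(x, w))
```

The integer dot product is where 8-bit activations pay off: both operands are low-precision integers, so the inner loop can map onto 8-bit tensor-core instructions instead of first widening the 4-bit weights to 16-bit, as a W4A16 kernel typically does.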

Cohere on X: "Excited to share our work on production-ready W4A8 inference, now integrated in vLLM! By combining 4-bit weights (low memory) with 8-bit activations (high compute), we hit the sweet spot for both decoding and prefill — up to 58% faster TTFT and 45% faster TPOT vs W4A16 on Hopper. https://t.co/M37wT5KS8Z"
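Why 4-bit weights help decoding while 8-bit activations help prefill can be seen from a standard roofline-style estimate for a single linear layer. The sketch is a back-of-the-envelope model, not Cohere's analysis. It assumes every weight and activation is read once, outputs are written in 16-bit, quantization-scale overhead is ignored, and a 4096×4096 layer is used purely for illustration; `linear_cost` is a hypothetical helper.

```python
# Hypothetical back-of-the-envelope model of one linear layer:
# M tokens x K input features -> N output features.
# Assumptions: each weight and activation is read once, outputs are written
# in 16-bit, quantization scales are ignored, FLOPs = 2*M*N*K.

def linear_cost(m, k, n, w_bits, a_bits, out_bits=16):
    """Return bytes moved, FLOPs and arithmetic intensity (FLOPs per byte)."""
    weight_bytes = n * k * w_bits / 8
    act_bytes = m * k * a_bits / 8
    out_bytes = m * n * out_bits / 8
    total_bytes = weight_bytes + act_bytes + out_bytes
    flops = 2 * m * n * k
    return {
        "weight_bytes": weight_bytes,
        "total_bytes": total_bytes,
        "flops": flops,
        "intensity": flops / total_bytes,
    }


if __name__ == "__main__":
    k = n = 4096  # illustrative layer size, not taken from the post
    for label, m in (("decode (M=1)", 1), ("prefill (M=4096)", 4096)):
        for scheme, w_bits, a_bits in (("W16A16", 16, 16), ("W4A16", 4, 16), ("W4A8", 4, 8)):
            c = linear_cost(m, k, n, w_bits, a_bits)
            share = c["weight_bytes"] / c["total_bytes"]
            print(f"{label:17s} {scheme:7s} MB moved={c['total_bytes'] / 1e6:8.1f} "
                  f"weight share={share:5.1%} FLOPs/byte={c['intensity']:8.1f}")
```

With M = 1 (a single decode step) the weights account for almost all of the bytes moved, so the layer is memory-bound and cutting weights from 16 to 4 bits reduces traffic roughly fourfold. With M in the thousands (prefill) the arithmetic intensity runs to thousands of FLOPs per byte, so the layer is compute-bound. There, the precision of the matrix math matters: W4A16 kernels typically widen weights to 16-bit, whereas 8-bit activations allow 8-bit tensor-core math, which on Hopper has roughly twice the dense peak throughput of BF16.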

