Are LLM agents good at join order optimization?

- LLM 在复杂查询优化中表现出一定潜力。

- 当前 LLM 方法在性能和准确性上仍存在局限。

- 结合传统优化器可能是更优解。

Are LLM agents good at join order optimization? | Databricks Blog

[](http://www.databricks.com/)

[](http://www.databricks.com/)

- Why Databricks

- * Discover

- Customers

- Partners

- Product

- * Databricks Platform

- Integrations and Data

- Pricing

- Open Source

- Solutions

- * Databricks for Industries

- Cross Industry Solutions

- Migration & Deployment

- Solution Accelerators

- Resources

- * Learning

- Events

- Blog and Podcasts

- Get Help

- Dive Deep

- About

- * Company

- Careers

- Press

- Security and Trust

- DATA + AI SUMMIT

Table of contents

Table of contents

Table of contents

AIApril 22, 2026

Are LLM agents good at join order optimization?

by Eric Liang, Ryan Marcus, Sid Taneja and Yuhao Zhang

Summary

- What it is: We explore applying frontier Large Language Model (LLM) agents to the classic database problem of SQL join order optimization.

- The Challenge: Traditional query optimizers often struggle with Join Ordering, where the number of possible plans grows exponentially with the number of tables, often leading to poor performance due to misestimated cardinality. LLM agents address this by acting like a data-driven DBA, reasoning through actual runtime statistics and semantic context that automated heuristics often miss.

- Results & Outcomes: In experimental benchmarks, the prototype agent improved upon the Databricks optimizer in 80% of cases, improving query latency by a factor of 1.3x overall.

Introduction

In the Databricks intelligence platform, we regularly explore and use new AI techniques to improve engine performance and simplify the user experience. Here we present experimental results on applying frontier models to one of the oldest database challenges: join ordering.

_This blog is part of a research collaboration with UPenn._

The Problem: Join Ordering

Since their inception, query optimizers in relational databases have struggled to find good join orderings for SQL queries. To illustrate the difficulty of join ordering, consider the following query:

**How many movies were produced by Sony and starred Scarlett Johansson?**

Suppose we want to execute this query over the following schema:

| **Table name** | **Table columns** | | --- | --- | | Actor | `actorID`, `actorName`, `actorDOB`, … | | Company | `companyID`, `companyName`, … | | Stars | `actorID`, `movieID` | | Produces | `companyID`, `movieID` | | Movie | `movieID`, `movieName`, `movieYear` , … |

The Actor, Company, and Movie entity tables are connected via the Produces and Stars relationship tables (e.g., via foreign keys). A version of this query in SQL might be:

sql

SELECT DISTINCT m.moveName

FROM Actor a, Company C, Stars s, Produces p, Movie m

WHERE a.actorName = 'Scarlett Johansson'

AND c.companyName = 'Sony'

AND s.actorID = a.actorID AND s.movieID = m.movieID

AND p.companyID = c.companyID AND p.movieID = m.movieID_Logically,_ we want to perform a simple join operation: _Actor ⋈ Stars ⋈ Movie ⋈ Produces ⋈ Company._ But _physically_, since each of these joins are commutative and associative, we have a lot of options. The query optimizer could choose to:

1. First find all movies starring Scarlett Johansson, then filter out for just the movies produced by Sony, 2. First find all movies produced by Sony, then filter out those movies starring Scarlett Johansson, 3. In parallel, compute Sony movies and Scarlett Johansson movies, then take the intersection.

Which plan is optimal depends on the data: if Scarlett Johansson has starred in significantly fewer movies than Sony has produced, the first plan might be optimal. Unfortunately, estimating this quantity is as difficult as executing the query itself (in general). Even worse, there are normally far more than 3 plans to choose from, as the number of possible plans grows exponentially with the number of tables — and analytics queries regularly join 20-30 different tables.

**How does join ordering work today?** Traditional query optimizers solve this problem with three components: a cardinality estimator designed to quickly guess the size of subqueries (e.g., to guess how many movies Sony has produced), a cost model to compare different potential plans, and a search procedure that navigates the exponentially-large space. Cardinality estimation is especially difficult, and has led to a wide range of research seeking to improve estimation accuracy using a wide range of approaches [A].

All of these solutions add significant complexity to a query optimizer, and require significant engineering effort to integrate, maintain, and productionize. **But what if LLM-powered agents, with their abilities to adapt to new domains with prompting, hold the key to solving this decades-old problem?**

Agentic join ordering

When query optimizers select a bad join ordering1, human experts can fix it by diagnosing the issue (often a misestimated cardinality), and instructing the query optimizer to choose a different ordering. This process often requires multiple rounds of testing (e.g., executing the query) and manually inspecting the intermediary results.

Query optimizers typically need to pick join orders in a few hundred milliseconds, so integrating an LLM into the hot path of the query optimizer, while potentially promising, is not possible today. But, the iterative and manual process of optimizing the join order for a query, which might take a human expert multiple hours, could potentially be automated with an LLM agent! This agent tries to automate that manual tuning process.

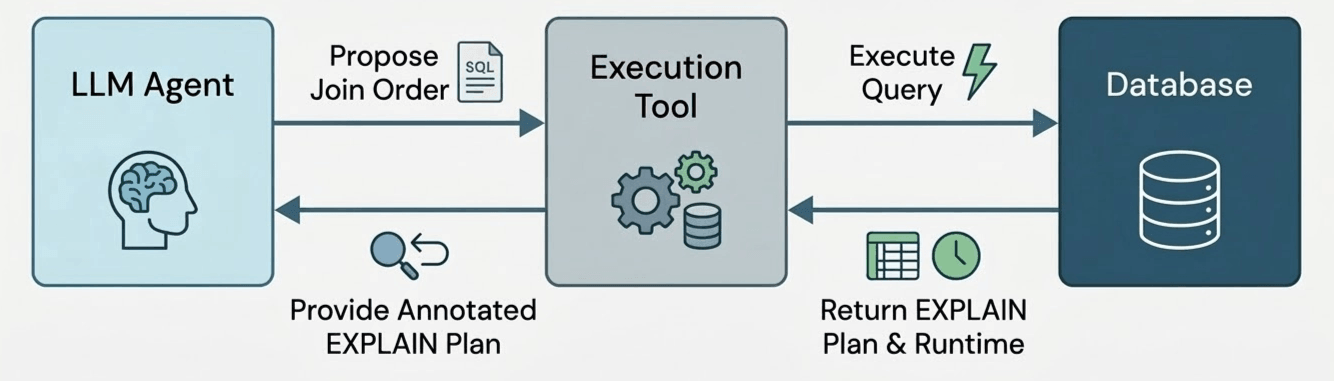

To test this, we developed **a prototype query optimization agent.** The agent has access to a single tool, which executes a potential join order for a query and returns the runtime of the join order (with a timeout of the original query’s runtime) and the size of each computed subplan (e.g., the EXPLAIN EXTENDED plan).

We let the agent run for 50 iterations, allowing the agent to freely try out different join orders. The agent is free to use these 50 iterations to test out promising plans (“exploitation”), or to explore risky-but-informative alternatives (“exploration”). Afterwards, we collect the best performing join order tested by the agent, which becomes our final result. But how do we know the agent picked a valid join order? To ensure correctness, each tool call generates a join ordering using structured model outputs, which forces the model’s output to match a grammar we specify to only admit valid join reorderings. Note that this differs from prior work [B] that asks the LLM to pick a join order instantly in the “hot path” of the query optimizer; instead, the LLM gets to act like an offline experimenter that tries many candidate plans and learns from the observed outcomes – just like a human tuning a join order by hand!.

To evaluate our agent in DBR, we used theJoin Order Benchmark (JOB), a set of queries that were designed to be difficult to optimize. Since the dataset used by JOB, the IMDb dataset, is only around 2GB (and therefore Databricks could process even poor join orderings fairly quickly), we scaled up the dataset by duplicating each row 10 times [C].

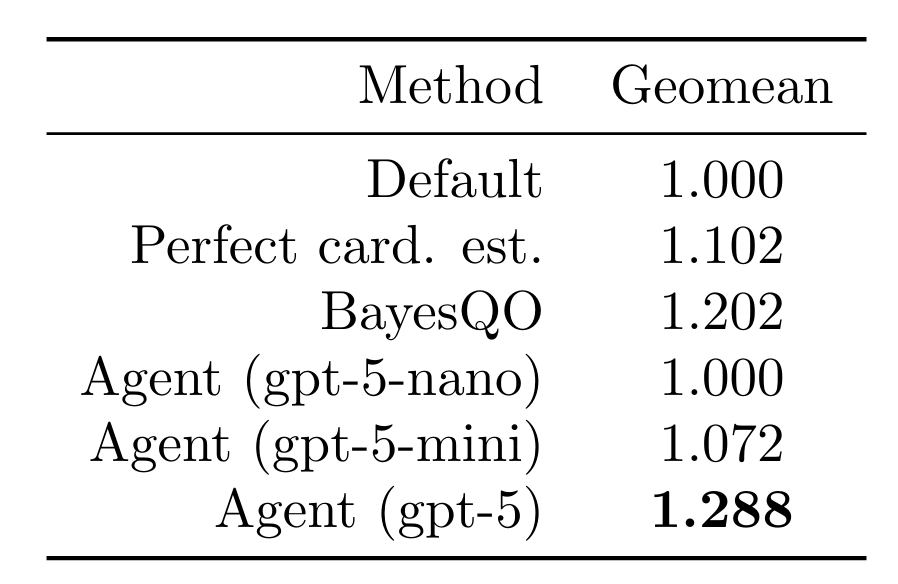

We let our agent test 15 join orders (rollouts) per query for all 113 queries in the join order benchmark. We report results on the best join order found for each query. When using a frontier model, the agent was able to improve query latency by a factor of 1.288 (geomean). This outperforms using perfect cardinality estimates (intractable in practice), smaller models, and the recentBayesQO offline optimizer (although BayesQO was designed for PostgreSQL, not Databricks).

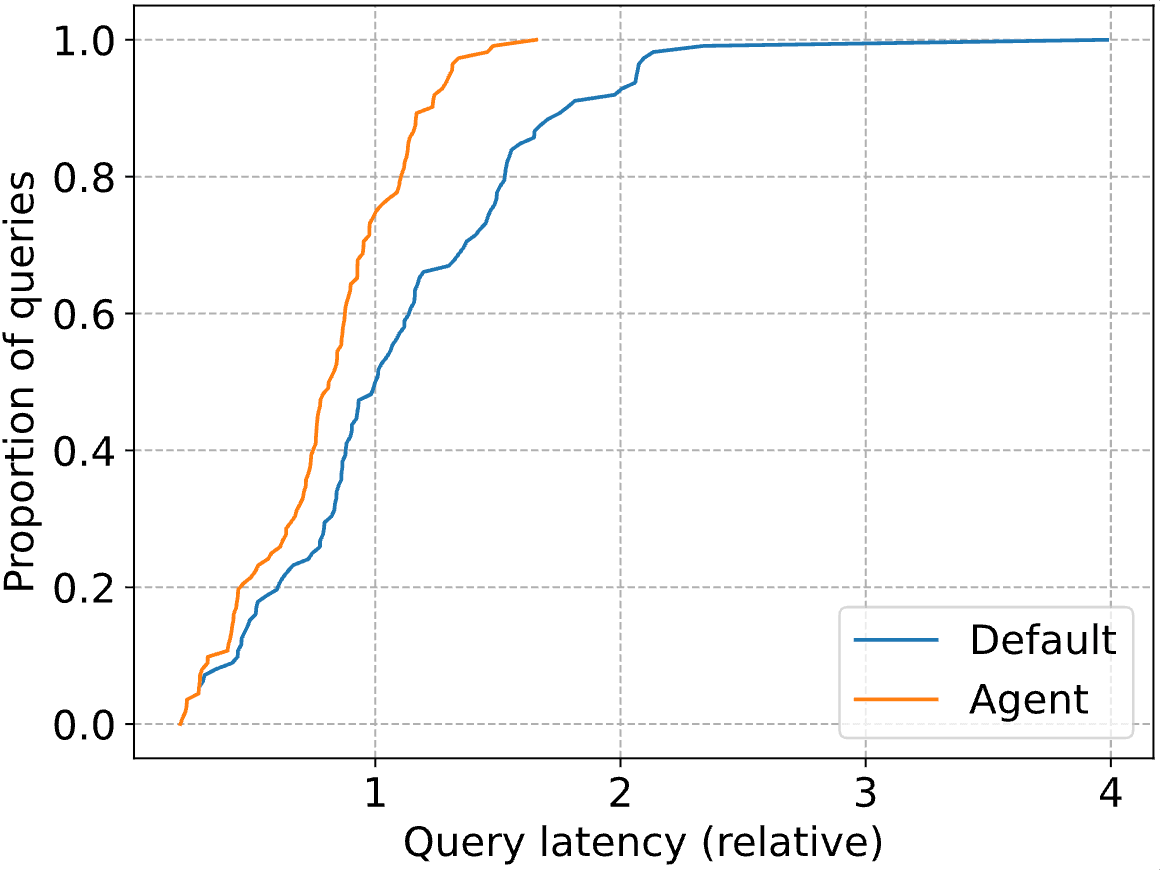

The real impressive gains are in the tail of the distribution, with the P90 query latency dropping by 41%. Below, we plot the entire CDF for both the standard Databricks optimizer (”Default”) and our agent (”Agent). Query latencies are normalized to the median latency of the Databricks optimizer (i.e., at 1, the blue line reaches a proportion of 0.5).

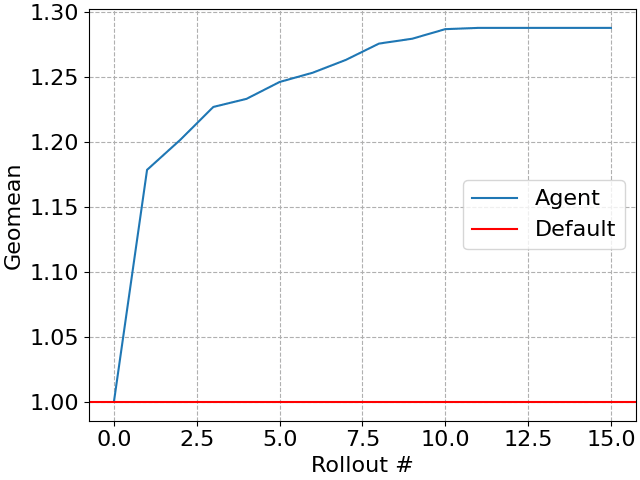

Our agent progressively improves the workload with each tested plan (sometimes called a _rollout_), creating a simple “anytime algorithm” where larger time budgets can be translated into further query performance. Of course, eventually query performance will stop improving.

One of the largest improvements our agent found was inquery 5b, a simple 5-way join which looks for American production companies that released a post-2010 movie on VHS with a note referencing 1994. The Databricks optimizer focused first on finding American VHS production companies (which is indeed selective, producing only 12 rows). The agent finds a plan that first looks for VHS releases referencing 1994, which turns out to be significantly faster. This is because the query uses `LIKE` predicates to identify VHS releases, which are exceptionally difficult for cardinality estimators.

Our prototype demonstrates the promise of agentic systems autonomously repairing and improving database queries. This exercise raised several questions about agent design in our minds:

1. **What tools should we give the agent?** In our current approach, the agent can execute candidate join orders. Why not let the agent issue specific cardinality queries (e.g., compute the size of a particular subplan), or queries to test certain assumptions about the data (e.g., to determine that there were no DVD releases prior to 1995). 2. **When should this agentic optimization be triggered?** Surely, a user can flag a problematic query manually for intervention. But could we also proactively apply this optimization to regularly-running queries? How can we determine if a query has “potential” for optimization? 3. **Can we automatically understand improvements?** When the agent finds a better join order than the one found by the default optimizer, this join order can be viewed as a proof that the default optimizer is choosing a suboptimal order. If the agent corrects a systematic error in the underlying optimizer, can we discover this and use it to improve the optimizer?

Of course, we are not the only ones thinking about the potential of LLMs for query optimization [D]. At Databricks, we are excited about the possibility of harnessing the generalizability of LLMs to improve data systems themselves.

If you are interested in this topic, see also our followup UCB blog on "How do LLM agents think through SQL join orders?".

Join Us

As we look ahead, we’re excited to keep pushing the boundaries of how AI can shape database optimizations. If you’re passionate about building the next generation of database engines, join us!

1 Databricks use techniques like runtime filters to mitigate the impact of poor join orders. The results presented here include those techniques.

Notes

A. Techniques for cardinality estimation have included, for example,adaptive feedback,deep learning,distribution modeling,database theory,learning theory, andfactor decompositions. Prior work has also attempted to entirely replace the traditional query optimizer architecture with deepreinforcementlearning,multi-armed bandits,Bayesian optimization, ormore advanced join algorithms.

B. RAG-based approaches, for example, have been used to build “LLM in the hot path” systems.

C. While crude, this approach has been used in prior work.

D. Other researchers have proposedRAG-based query repair systems,LLM-powered query rewrite systems, and evenentire database systemssynthesized by LLMs.

Get the latest posts in your inbox

Subscribe to our blog and get the latest posts delivered to your inbox.

Sign up

*

Work Email

*

Country Country*

By clicking “Subscribe” I understand that I will receive Databricks communications, and I agree to Databricks processing my personal data in accordance with its Privacy Policy.

Subscribe

What's next?

→

December 9, 2025/12 min read

#### Introducing OfficeQA: A Benchmark for End-to-End Grounded Reasoning

→

March 5, 2026/3 min read

#### Meet KARL: A Faster Agent for Enterprise Knowledge, powered by custom RL

Why Databricks

Discover

Customers

Partners

Why Databricks

Discover

Customers

Partners

Product

Databricks Platform

- Platform Overview

- Sharing

- Governance

- Artificial Intelligence

- Business Intelligence

- Database

- Data Management

- Data Warehousing

- Data Engineering

- Data Science

- Application Development

- Security

Pricing

Integrations and Data

Product

Databricks Platform

- Platform Overview

- Sharing

- Governance

- Artificial Intelligence

- Business Intelligence

- Database

- Data Management

- Data Warehousing

- Data Engineering

- Data Science

- Application Development

- Security

Pricing

Open Source

Integrations and Data

Solutions

Databricks For Industries

- Communications

- Financial Services

- Healthcare and Life Sciences

- Manufacturing

- Media and Entertainment

- Public Sector

- Retail

- View All

Cross Industry Solutions

Solutions

Databricks For Industries

- Communications

- Financial Services

- Healthcare and Life Sciences

- Manufacturing

- Media and Entertainment

- Public Sector

- Retail

- View All

Cross Industry Solutions

Data Migration

Professional Services

Solution Accelerators

Resources

Learning

Events

Blog and Podcasts

Resources

Documentation

Customer Support

Community

Learning

Events

Blog and Podcasts

About

Company

Careers

Press

About

Company

Careers

Press

Security and Trust

Databricks Inc.

160 Spear Street, 15th Floor

San Francisco, CA 94105

1-866-330-0121

- [](https://www.linkedin.com/company/databricks)

- [](https://www.facebook.com/pages/Databricks/560203607379694)

- [](https://twitter.com/databricks)

- [](https://www.databricks.com/feed)

- [](https://www.glassdoor.com/Overview/Working-at-Databricks-EI_IE954734.11,21.htm)

- [](https://www.youtube.com/@Databricks)

- [](https://www.linkedin.com/company/databricks)

- [](https://www.facebook.com/pages/Databricks/560203607379694)

- [](https://twitter.com/databricks)

- [](https://www.databricks.com/feed)

- [](https://www.glassdoor.com/Overview/Working-at-Databricks-EI_IE954734.11,21.htm)

- [](https://www.youtube.com/@Databricks)

© Databricks 2026. All rights reserved. Apache, Apache Spark, Spark, the Spark Logo, Apache Iceberg, Iceberg, and the Apache Iceberg logo are trademarks of the Apache Software Foundation.

- Privacy Notice

- |Terms of Use

- |Modern Slavery Statement

- |California Privacy

- |Your Privacy Choices

- !Image 18

We Care About Your Privacy

Databricks uses cookies and similar technologies to enhance site navigation, analyze site usage, personalize content and ads, and as further described in our Cookie Notice. To disable non-essential cookies, click “Reject All”. You can also manage your cookie settings by clicking “Manage Preferences.”

Manage Preferences

Reject All Accept All

Privacy Preference Center

Opt-Out Preference Signal Honored

Privacy Preference Center

- ### Your Privacy

- ### Strictly Necessary Cookies

- ### Performance Cookies

- ### Functional Cookies

- ### Targeting Cookies

- ### TOTHR

#### Your Privacy

When you visit any website, it may store or retrieve information on your browser, mostly in the form of cookies. This information might be about you, your preferences or your device and is mostly used to make the site work as you expect it to. The information does not usually directly identify you, but it can give you a more personalized web experience. Because we respect your right to privacy, you can choose not to allow some types of cookies. Click on the different category headings to find out more and change our default settings. However, blocking some types of cookies may impact your experience of the site and the services we are able to offer.

#### Opting out of sales, sharing, and targeted advertising

Depending on your location, you may have the right to opt out of the “sale” or “sharing” of your personal information or the processing of your personal information for purposes of online “targeted advertising.” You can opt out based on cookies and similar identifiers by disabling optional cookies here. To opt out based on other identifiers (such as your email address), submit a request in our Privacy Request Center.

#### Strictly Necessary Cookies

Always Active

These cookies are necessary for the website to function and cannot be switched off in our systems. They assist with essential site functionality such as setting your privacy preferences, logging in or filling in forms. You can set your browser to block or alert you about these cookies, but some parts of the site will no longer work.

#### Performance Cookies

- [x] Performance Cookies

These cookies allow us to count visits and traffic sources so we can measure and improve the performance of our site. They help us to know which pages are the most and least popular and see how visitors move around the site.

#### Functional Cookies

- [x] Functional Cookies

These cookies enable the website to provide enhanced functionality and personalization. They may be set by us or by third party providers whose services we have added to our pages. If you do not allow these cookies then some or all of these services may not function properly.

#### Targeting Cookies

- [x] Targeting Cookies

These cookies may be set through our site by our advertising partners. They may be used by those companies to build a profile of your interests and show you relevant advertisements on other sites. If you do not allow these cookies, you will experience less targeted advertising.

#### TOTHR

- [x] TOTHR

Cookie List

Consent Leg.Interest

- [x] checkbox label label

- [x] checkbox label label

- [x] checkbox label label

Clear

- - [x] checkbox label label

Apply Cancel

Confirm My Choices

Allow All