From Security Blocked to Prod Ready: ClickHouse on Docker Hardened Images


Posted Apr 30, 2026

Ajeet Singh Raina and Siddhant Agarwal

In November 2025, a team self-hosting Langfuse, an open-source LLM observability platform, on Kubernetes uploaded their ClickHouse image to AWS ECR as part of their production preparation. They found that the pipeline scanner had returned three critical vulnerabilities – not in ClickHouse, but in the base image. Their security team saw the findings and blocked the deployment before it ever reached production.

“_Our security team is not allowing us to take it to production. Please suggest alternatives._”

vinaygoel586

GitHub Issue #286, November 28, 2025

If you’ve shipped containers into an enterprise environment recently, this situation will sound familiar. A perfectly functional deployment gets blocked not because something is broken, but because a scanner found CVEs in packages the application never even touches. A day goes into investigating the findings, a risk exception gets written up, and the security team rejects it anyway, because the vulnerabilities are technically real even if they’re practically irrelevant to your workload.

This post is about how Docker Hardened Images (DHI) get you unstuck when a security team blocks a container deployment over CVEs. Specifically, we will look at the image for ClickHouse, one of the most widely pulled database images on Docker Hub.

A Quick Word on ClickHouse

ClickHouse is an open-source columnar database built for analytical workloads at scale. It can query billions of rows and return results in milliseconds, which traditional row-oriented databases simply can’t match. Companies such as Cloudflare, Uber, and Spotify all run it in production. With over 100 million pulls from Docker Hub, it has become the default infrastructure choice for teams that need serious analytics throughput. The image’s default security posture, though, was designed with developer ease-of-use in mind rather than the hardening that enterprise production environments demand, and that gap is where the trouble starts.

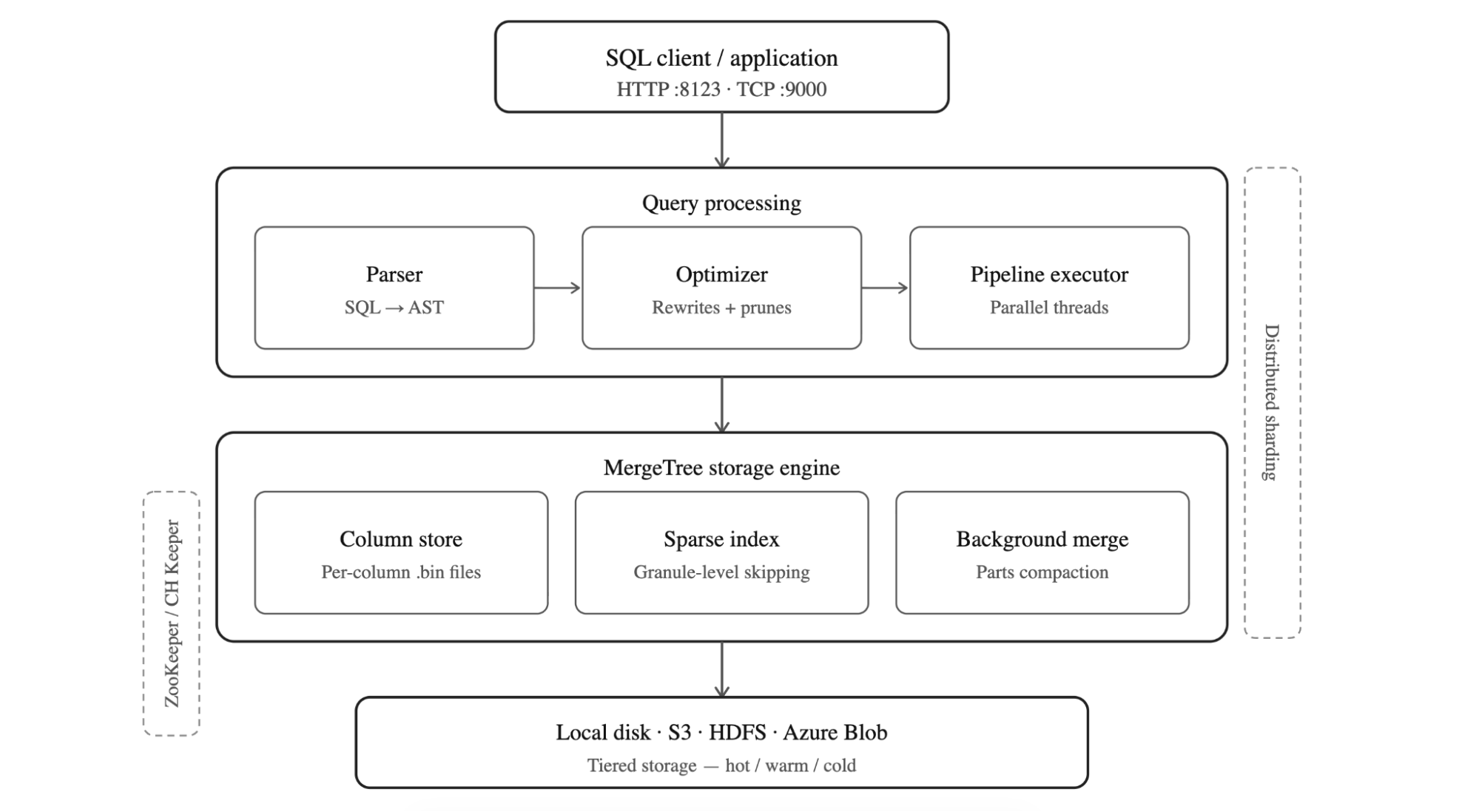

_Figure: The layered architecture of ClickHouse_

How ClickHouse is Structured

ClickHouse follows a layered architecture designed for analytical speed at scale. SQL queries arrive over HTTP (port 8123) or TCP (port 9000), then pass through the optimizer, which parses each query into an abstract syntax tree and prunes it before the pipeline executor picks it up and hands the work off to parallel threads. Beneath the query layer sits the MergeTree storage engine, the heart of ClickHouse, which stores data in columnar `.bin` files. It uses a sparse primary index to skip irrelevant granules without reading entire columns, and runs background merge processes to compact parts and maintain query performance over time.

At the bottom, storage is pluggable: local disk, S3, HDFS, or Azure Blob, with tiered hot/warm/cold policies to balance cost and latency. In distributed deployments, ClickHouse Keeper (or ZooKeeper) coordinates replication across replicas, while sharding splits data horizontally across nodes, allowing the cluster to scale reads and writes independently. The result is a database that processes hundreds of millions of rows per second per server, making it the default choice for teams running serious analytics workloads.

The Real Problem: It’s Not ClickHouse, It’s the Packaging

The standard `clickhouse/clickhouse-server` image is built on a full Ubuntu 22.04 base. That base ships with a lot of things ClickHouse doesn’t need, such as Perl, system utilities, `apt` itself, and dozens of transitive dependencies. They are in the image simply because Ubuntu brought them along, often as outdated packages, and in many cases Ubuntu maintainers decide not to backport fixes from upstream.

ClickHouse doesn’t use most of those system utilities, but the CVEs in those packages are real. They show up in Trivy, Grype, and AWS ECR scans, and a scanner has no way to distinguish a vulnerable library that’s never loaded from one that’s actively running in production. Your security team sees critical findings and blocks the deployment, which is the correct thing for them to do given what the scanner is telling them.

The instinct at this point is to argue the case, documenting why each CVE doesn’t apply to your workload, writing risk exceptions and escalating, but that’s a slow process. The only real fix is to remove those unnecessary packages entirely. That’s what Docker Hardened Images do.

What DHI Actually Changes

Docker Hardened Images for ClickHouse are built around a straightforward question: what does the database actually need to run? Rather than starting from a full Ubuntu base and hoping the CVE count stays manageable, DHI ships only what ClickHouse requires and leaves everything else out.

The most immediate consequence of that is the absence of `apt` at runtime. Without a package manager, an attacker who gains a foothold in the container has no obvious path to installing tools or establishing persistence. Network utilities like `curl` and `wget` are gone for the same reason. The standard `clickhouse/clickhouse-server` image has been carrying `wget` with `CVE-2021-31879` unpatched since 2021 because, as the Ubuntu maintainer notes, there is no upstream fix. That is a vulnerability in a tool ClickHouse never needed in the first place. DHI doesn’t patch it; it simply doesn’t include `wget` at all. A shell is still available for operational work, but without the package manager and network tools, there’s very little an attacker can actually do with it.

To make this practical across different stages of a pipeline, DHI ships two variants. The development image (dev) includes additional tooling that makes local testing and debugging more comfortable. The production image (runtime) strips that back to the absolute minimum, giving you the smallest possible attack surface for the workload that actually faces the world. The intent is that teams adopt the dev variant early in the pipeline and promote the hardened production image through to deployment, rather than discovering the differences at the point where it matters most.

The image also runs as a non-root user (`uid=65532`) out of the box, with no additional Dockerfile configuration required. On the provenance side, every DHI image ships with SLSA Level 3 attestation, which provides cryptographic proof of exactly what went into the build and how it was produced. Docker’s security team actively tracks and patches CVEs, and the presence of 2026 CVE IDs in DHI’s findings is evidence of that remediation happening ahead of public disclosure feeds rather than in response to them.

Getting Started

Before you can pull a DHI image, you need to mirror it to your organization’s namespace on Docker Hub. This is a one-time setup per image, not per tag, and it means all future updates flow to your namespace automatically.

1. Log in to Docker Hub and open the DHI catalog.

2. Find `clickhouse-server` and select **Mirror to repository**.

3. Follow the on-screen instructions.

4. Authenticate locally: `docker login dhi.io`

Once that’s done, you’re pulling from your own namespace with the same image, same tags, same ClickHouse – just hardened.

### Your first DHI ClickHouse container

`docker run --name my-clickhouse-server -d \`

The `--ulimit nofile=262144:262144` flag is a ClickHouse requirement, not a DHI one – ClickHouse needs high file descriptor limits to operate correctly. Keep it in all your run commands.
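To confirm the limit actually reached the container, one option is to read it back from inside. The sketch below is a minimal check, assuming the container name `my-clickhouse-server` used in this post and that `bash` is available in the image; `REQUIRED_NOFILE`, `check_nofile`, and `container_nofile` are hypothetical names introduced here for illustration.

```shell
#!/bin/sh
# Hypothetical helper: compare a reported open-file limit with the value
# passed via --ulimit nofile=262144:262144.
REQUIRED_NOFILE=262144

check_nofile() {
    # $1 is a limit as printed by `ulimit -n`: an integer or "unlimited"
    if [ "$1" = "unlimited" ]; then
        echo "ok"
    elif [ "$1" -ge "$REQUIRED_NOFILE" ]; then
        echo "ok"
    else
        echo "too-low"
    fi
}

# Read the soft limit seen by a process started inside the running container.
# Assumes the container name from this post and a bash binary in the image.
container_nofile() {
    docker exec my-clickhouse-server bash -c 'ulimit -n'
}
```

With the container running, `check_nofile "$(container_nofile)"` prints `ok` when the limit is at least 262144 and `too-low` otherwise.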

Verify it started:

`docker exec my-clickhouse-server clickhouse-client \`

### Production setup with persistent storage

For anything beyond local testing, you want volumes and a password:

`docker run -d \`

Note that `CLICKHOUSE_PASSWORD` is required if you want to access ClickHouse over the network. DHI disables unauthenticated network access by default, which is the right call for any production deployment.

Test it over HTTP:

`curl "http://localhost:8123/?query=SELECT%20version()&user=default&password=mysecretpassword"`
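The one-liner above carries the password in the URL, which is convenient for a quick test but easy to leak into shell history. As a hedged alternative, ClickHouse's HTTP interface also accepts credentials in the `X-ClickHouse-User` and `X-ClickHouse-Key` headers. The sketch below assumes the server is reachable on `localhost:8123`, that the password is exported as `CLICKHOUSE_PASSWORD` in the calling shell, and that `curl` runs on the host rather than inside the hardened container; `ch_query` is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: run one SQL statement over the HTTP interface,
# sending credentials as headers rather than URL parameters.
ch_query() {
    # $1 is the SQL text, sent as the request body
    curl -sS "http://localhost:8123/" \
        -H "X-ClickHouse-User: default" \
        -H "X-ClickHouse-Key: ${CLICKHOUSE_PASSWORD}" \
        --data-binary "$1"
}
```

For example, after `export CLICKHOUSE_PASSWORD=mysecretpassword`, calling `ch_query "SELECT version()"` sends the same query as the URL form above.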

### Custom configuration

If you’re already running ClickHouse with custom XML config, nothing changes. Same format, same mount path:

`cat > custom-config.xml << EOF`

`<clickhouse>`

`</clickhouse>`

`EOF`

`docker run -d \`

Running DHI ClickHouse on Kubernetes

For Kubernetes, there’s one important addition to your pod spec. Since DHI runs as a non-root user, you need to set `fsGroup` to ensure your persistent volume data is accessible:

`spec:`

One thing worth mentioning: ClickHouse’s default ports 8123 and 9000 are above the 1024 privileged port boundary, so running as nonroot doesn’t cause any port binding issues.
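The `fsGroup` requirement described above can be sketched as a pod-level `securityContext`. This is a minimal illustration rather than the manifest from the original post: the pod name, claim name, and file name are hypothetical, the image reference is the one used elsewhere in this post, and `/var/lib/clickhouse` is assumed to be the data directory. The `65532` value matches the non-root uid the image runs as.

```shell
#!/bin/sh
# Write a minimal Pod manifest; names marked "hypothetical" are placeholders.
cat > clickhouse-pod.yaml << 'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: clickhouse-dhi                # hypothetical pod name
spec:
  securityContext:
    runAsNonRoot: true                # refuse to start if the image would run as root
    runAsUser: 65532                  # the non-root uid the DHI image uses
    fsGroup: 65532                    # makes the mounted volume accessible to that user
  containers:
    - name: clickhouse
      image: dhi.io/clickhouse-server:26.2-debian13
      ports:
        - containerPort: 8123         # HTTP
        - containerPort: 9000         # native TCP
      volumeMounts:
        - name: data
          mountPath: /var/lib/clickhouse
  volumes:
    - name: data
      persistentVolumeClaim:
        claimName: clickhouse-data    # hypothetical claim name
EOF

# Show the line that matters for volume permissions
grep -n 'fsGroup' clickhouse-pod.yaml
```

Once the claim exists, `kubectl apply -f clickhouse-pod.yaml` would create the pod. In a real deployment the same `securityContext` block would normally sit in a StatefulSet or operator-managed template rather than a bare Pod.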

### The metrics exporter

If you’re running ClickHouse on Kubernetes and need Prometheus metrics, Docker also ships `clickhouse-metrics-exporter` – a hardened image that works with the ClickHouse Operator to expose a `/metrics` endpoint. It’s 65% smaller than the standard exporter (10.3 MB vs 29.4 MB) and has 75% fewer layers (5 vs 20). Same data, dramatically smaller surface.

`containers:`

`- name: metrics-exporter`
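The size and layer percentages quoted for the exporter follow directly from the raw figures. A small sketch of the arithmetic, using only the numbers stated above (`pct_reduction` is a hypothetical helper name):

```shell
#!/bin/sh
# Percentage reduction from a baseline value to a new value,
# rounded to a whole percent.
pct_reduction() {
    awk -v old="$1" -v new="$2" 'BEGIN { printf "%.0f\n", (old - new) / old * 100 }'
}

pct_reduction 29.4 10.3   # image size in MB: (29.4 - 10.3) / 29.4
pct_reduction 20 5        # layer count: (20 - 5) / 20
```

The two calls print `65` and `75`, matching the figures in the text.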

Debugging without the usual tools

The debugging story is simpler than it might seem. `docker debug` attaches an ephemeral layer to the running container that includes bash, curl, strace, vim, and anything else you need without modifying the production image itself. When you exit, the layer disappears and the container is exactly as it was. It’s a cleaner approach than shelling directly into a production container, and in practice it’s a single command:

`docker debug my-clickhouse-server`

Or if you prefer, you can mount a debug image alongside the container:

`docker run --rm -it --pid container:my-clickhouse-server \`

There’s also a broader security benefit that goes beyond CVE counts. If something does go wrong in production, an attacker who gets into the container finds no package manager to install tools with, no curl or wget to exfiltrate data through, and no obvious path to reach out to the network, which significantly limits what a compromise can actually turn into.

ClickHouse: Non-hardened Image vs. Hardened Image Compared

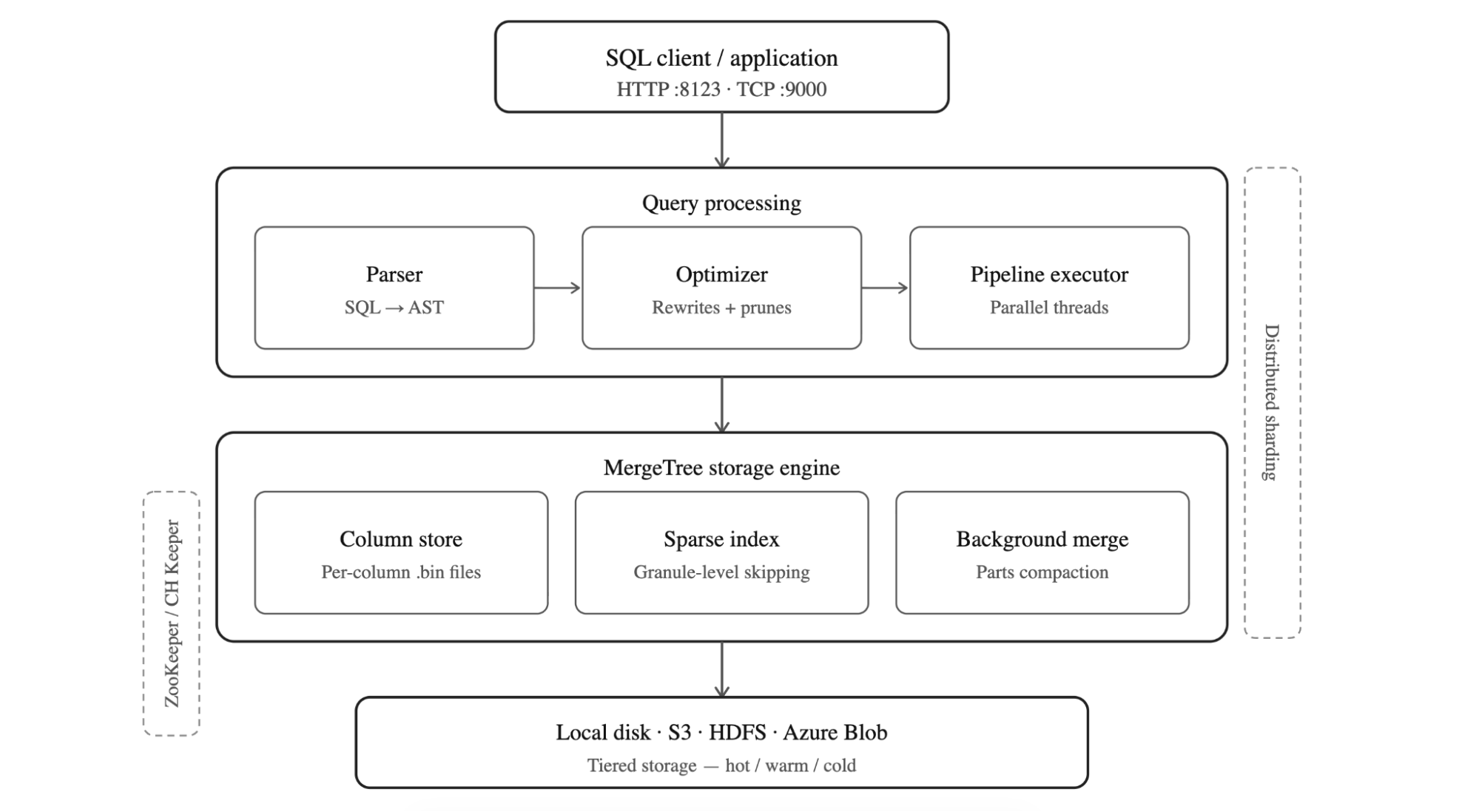

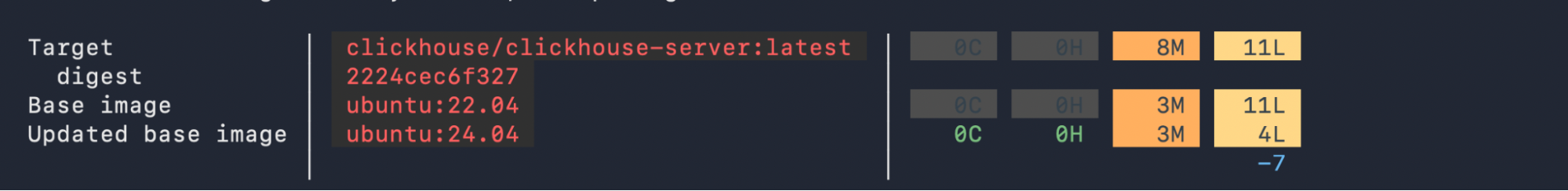

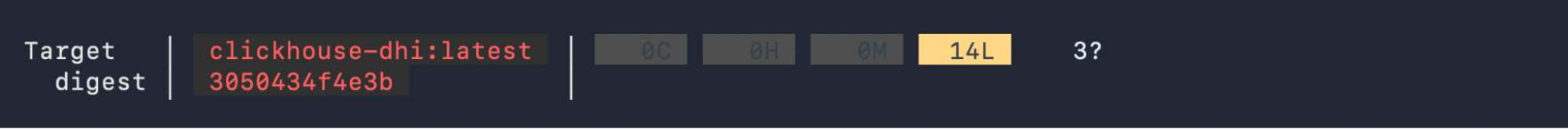

A Docker Scout scan of both images puts the difference in plain numbers. Using `ubuntu:22.04` as its base, the standard image carries `8` medium and `11` low severity vulnerabilities across 111 packages, including the wget and tar findings that are most likely to trigger a security block in an enterprise pipeline. The DHI image eliminates all medium severity findings and comes in at `14` low severity items, but these are in core system libraries like `glibc` and `openssl` where no fix exists on any distribution, not in unnecessary utilities that had no business being in the image. The `3` unconfirmed findings that Scout surfaces have already been assessed and suppressed via VEX attestation, which ships with the image as part of its SLSA Level 3 provenance.

To see the difference for yourself, or to compare versions of any other image, you can run your own scan with Docker Scout using these commands:

`docker scout quickview clickhouse/clickhouse-server:latest`

`docker pull dhi.io/clickhouse-server:26.2-debian13`

`docker tag dhi.io/clickhouse-server:26.2-debian13 clickhouse-dhi:latest`

`docker scout quickview clickhouse-dhi:latest`

| | Non-Hardened ClickHouse Image | Docker Hardened Image |
| --- | --- | --- |
| Default user | root (steps down to the clickhouse user at runtime via the entrypoint, but the Dockerfile has no USER directive; overridable with CLICKHOUSE_RUN_AS_ROOT=1) | nonroot (enforced at image level via USER directive; cannot be overridden at runtime) |
| Shell access | Full shell (bash/sh) available | bash present, no network tools or package manager |
| Package manager | apt available | No package manager |
| CVE exposure | Ships wget (CVE-2021-31879, unpatched since 2021), tar (CVE-2025-45582) | No wget, no tar – unnecessary packages removed entirely |
| CVE patching | Unpatched findings from 2021–2025 due to the lack of upstream fixes in the Ubuntu base image | Actively tracked, 2026 CVE IDs show proactive remediation |
| Provenance | Standard | SLSA Level 3 attestation |
| Compliance | Manual hardening required | CIS, NIST, FedRAMP-aligned |
| Debugging | Traditional shell debugging | Use docker debug or Image Mount for troubleshooting |

The Security Team Conversation

The team that got blocked at AWS ECR in November 2025 didn’t have a ClickHouse problem; they had a base image problem. Their database was fine; what the scanner was finding were CVEs in Perl, system utilities, and other packages that had come along in the Ubuntu base and were never used by the application. Nothing in the scanner output made that distinction, so the security team did exactly what they were supposed to do and blocked the deployment.

With DHI, that conversation with your security team becomes considerably more straightforward. Rather than building a case for why specific CVEs don’t apply to your workload, you can point to an image built by Docker’s security team from the minimum required components, with SLSA Level 3 provenance and independent validation by SRLabs. The ClickHouse runtime itself is unchanged: queries, ports, configuration files, and performance all carry over, so the only thing you’re actually changing is the answer you can give when someone asks whether this image can go to production.

For teams that need stronger guarantees, DHI Enterprise adds SLA-backed CVE remediation within seven days, FIPS and STIG variants, and extended lifecycle support. For most teams, the free Enterprise trial is the right starting point. It answers the question that actually matters before you commit to anything. Interested in learning more? Start with this blog that walks through the trial and sets you up for success.

Migration Checklist

`☐ Mirror clickhouse-server DHI image to your Docker Hub namespace (one-time setup)`

`☐ Update your image reference to dhi.io/clickhouse-server:26.2-debian13`

`☐ Set CLICKHOUSE_PASSWORD (required for network access in DHI)`

`☐ Keep --ulimit nofile=262144:262144 on all run commands`

`☐ In Kubernetes: add fsGroup: 65532 to your pod securityContext`

`☐ Switch from kubectl exec to kubectl debug for troubleshooting`

`☐ Run trivy against both images to see the difference yourself:`
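One way the Trivy comparison in the last checklist item might look is sketched below. This is an assumption-labeled illustration, not the command from the original post: `compare_images`, `STANDARD_IMAGE`, and `HARDENED_IMAGE` are hypothetical names, the image references are the ones used earlier in this post, and the severity filter is an arbitrary choice. Pulling the hardened image assumes you have already run `docker login dhi.io`; if you pull from your mirrored namespace instead, substitute that reference.

```shell
#!/bin/sh
# Hypothetical comparison helper; image references are taken from this post.
STANDARD_IMAGE="clickhouse/clickhouse-server:latest"
HARDENED_IMAGE="dhi.io/clickhouse-server:26.2-debian13"

compare_images() {
    for image in "$STANDARD_IMAGE" "$HARDENED_IMAGE"; do
        echo "== $image =="
        # Limit the report to the severities most likely to trigger a block
        trivy image --severity CRITICAL,HIGH,MEDIUM "$image"
    done
}
```

Calling `compare_images` scans the two images one after the other so the findings can be read side by side.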

The migration is narrower in scope than it might appear – your volume mounts, port mappings, and existing XML configuration files all carry over without modification, and on Kubernetes the only structural addition is the fsGroup security context. Everything else is an image reference change.

Resources

- Docker Hardened Images Documentation

- DHI ClickHouse Server Guide

- DHI ClickHouse Metrics Exporter Guide

- Docker Debug Documentation

- Free DHI Catalog

- DHI Community Announcement

- Docker Scout Documentation

About the Authors

Developer Advocate, Docker

Ajeet Singh Raina, Developer Advocate at Docker, writes and speaks on containers, Docker Compose & AI, helping devs build confidently.

Senior Developer Relations Advocate, ClickHouse



