Announcing the Public Preview of Lakeflow Designer

Table of contents

- What is Lakeflow Designer?

- What makes Lakeflow Designer different?

- How teams are using Lakeflow Designer

- Getting started

Platform · April 23, 2026

A no-code, AI-native, fully governed experience for data preparation on Databricks

by Jason Messer, Emanuel Zgraggen, V Maharajh, Matt Jones and Tracy Yang

Summary

- Lakeflow Designer is now in Public Preview, giving Databricks users a visual, no-code, AI-native way to prepare and analyze data.

- Built directly on Databricks and governed by Unity Catalog, Lakeflow Designer keeps data in place while providing lineage, permissions, and production-ready code from day one.

- Lakeflow Designer helps make AI-generated transformations easier to review and trust by breaking work into visual operators with step-by-step previews of how data changes.

We first introduced Lakeflow Designer at Data and AI Summit last year. Since then, we’ve worked closely with early customers to refine the product and better understand where it is most useful. Today, we’re excited to announce the **Public Preview of Lakeflow Designer**. Lakeflow Designer removes one of the biggest bottlenecks in data today: the technical barrier to entry.

What is Lakeflow Designer?

Lakeflow Designer is a visual, no-code, AI-native experience for data preparation and analytics. Built directly in Databricks, it lets analysts, domain experts, and other less technical users prepare and explore data through a drag-and-drop canvas and natural language.

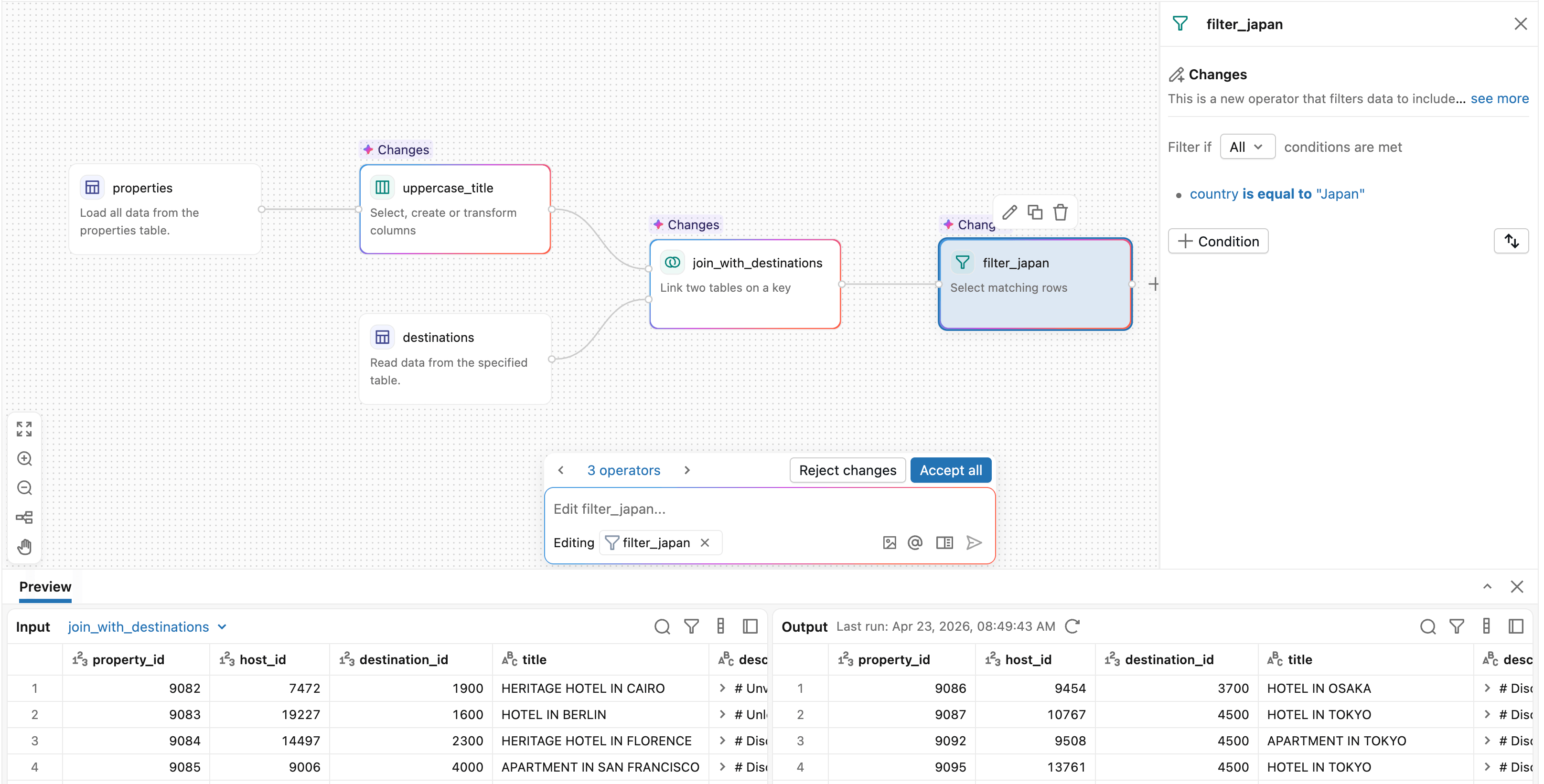

Each step in Lakeflow Designer is represented as an operator, giving users a clear picture of how data changes throughout the workflow. This makes it easier to build, validate, and understand transformations as you go.

Lakeflow Designer extends the power of Databricks Lakeflow to a broader set of users, enabling no-code data preparation while still generating production-ready code under the hood. Workflows can be scheduled and operationalized through Lakeflow Jobs, making it easy to move from interactive data prep to production pipelines.

Lakeflow Designer expands autonomy for business teams, enabling the efficient creation of data views through natural language and best practices, while ensuring data consistency, governance, and reliability. — Phelipe Naman, Data & Analytics Architecture Tech Lead, Sabesp

What makes Lakeflow Designer different?

Self-service data prep is not a new idea, but existing tools sit outside your central data platform. That comes with tradeoffs:

- Disconnect between the data prep tool and the data platform creates governance gaps and additional IT overhead

- AI is bolted on and suggestions are generic because the tool has no real understanding of the data

- Visual workflows are difficult to productionize, with logic often trapped in domain-specific languages or the UI

- Per-user licensing is expensive and limits who has access

Lakeflow Designer takes a different approach.

**1. Built natively on Databricks for governance and simplicity**

Lakeflow Designer runs directly where your data already lives - on Databricks. There’s no need to move data into a separate tool or onto your local machine. Data remains in place, governed by Unity Catalog from the start, while simplifying the overall data stack. Instead of managing a separate low-code tool with its own licensing, permissions, and administration model, organizations can enable self-service work directly within Databricks.

KPMG UK delivers audit and assurance services to thousands of companies - each with a different data landscape. Equipping our practitioners with Lakeflow Designer enables a visual, low-code and AI assisted workflow that scales and democratises our ability to translate complex and varied data sets into meaningful insights. — Mark Wallington, Audit Data and AI Partner, KPMG UK

_Start working with native source data right away_

**2. Built from the ground up for AI, and designed to make AI reviewable**

Lakeflow Designer is built on Genie Code, Databricks’ native agentic coding assistant. AI is not an add-on here. It is core to how the product works. Simply describe what you want in plain English, and Genie Code can generate or modify the workflow directly.

_AI-native authoring that just works_

Because Lakeflow Designer is embedded directly in the Databricks workspace, Genie Code can reason over more than just column names. It can use Unity Catalog metadata, table descriptions, lineage, popularity, and example queries to understand the semantic meaning of data and identify the right assets for a task. This leads to more context-aware and accurate suggestions than tools that only see the schema.

This architecture also opens the door to more agentic behavior. Rather than generating a static result once, the system can execute a transformation, inspect the output, and iterate when needed. For example, if a join fails or returns no rows, Genie Code can evaluate the result and try an alternative approach.
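The execute, inspect, and iterate pattern described above can be sketched in a few lines of plain Python. This is only an illustration of the general idea, not Genie Code's actual implementation: the helper names `inner_join` and `join_with_retry`, the sample tables, and the candidate key pairs are all hypothetical.

```python
def inner_join(left, right, left_key, right_key):
    """Hash-join two lists of dict rows on the given key columns."""
    index = {}
    for r in right:
        index.setdefault(r[right_key], []).append(r)
    return [{**l, **r} for l in left for r in index.get(l[left_key], [])]


def join_with_retry(left, right, candidate_keys):
    """Try each (left_key, right_key) pair in order and keep the first
    join that returns rows. Every attempt is logged so it can be reviewed."""
    attempts = []
    for left_key, right_key in candidate_keys:
        try:
            rows = inner_join(left, right, left_key, right_key)
        except KeyError as exc:
            # The join failed outright, e.g. the column does not exist.
            attempts.append((left_key, right_key, f"failed: missing column {exc}"))
            continue
        attempts.append((left_key, right_key, f"{len(rows)} rows"))
        if rows:  # Inspect the output; stop once the join produces rows.
            return rows, attempts
    return [], attempts


# Hypothetical sample data: customer_id is a string code, customer_num is numeric.
orders = [
    {"order_id": 10, "customer_id": "C-001", "customer_num": 1},
    {"order_id": 11, "customer_id": "C-002", "customer_num": 2},
]
customers = [
    {"id": 1, "name": "Acme"},
    {"id": 2, "name": "Globex"},
]

joined, attempts = join_with_retry(
    orders,
    customers,
    candidate_keys=[("customer_id", "id"), ("customer_num", "id")],
)
for attempt in attempts:
    print(attempt)
```

In this sketch the first key pair matches nothing because the types differ, so the loop moves on to the second pair. Keeping the attempt log is a deliberate choice: it lets a reviewer see which alternatives were tried and why one was kept.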

Perhaps just as importantly, Lakeflow Designer makes AI-generated transformations easy to understand and validate by breaking them into discrete visual operators with data previews at every step. You can see exactly what changed, where rows were filtered, how a join was resolved, and what the output looks like before moving on.
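To make the idea of step-by-step previews concrete, here is a minimal sketch in plain Python, assuming each operator is simply a function from rows to rows. It is not the code Lakeflow Designer generates; `run_with_previews`, `sum_by_region`, and the sample data are hypothetical names chosen for illustration.

```python
def run_with_previews(rows, operators, sample_size=2):
    """Apply each (name, fn) operator in order and record row counts
    plus a small sample of the output after every step."""
    previews = []
    for name, fn in operators:
        rows_in = len(rows)
        rows = fn(rows)
        previews.append(
            {
                "step": name,
                "rows_in": rows_in,
                "rows_out": len(rows),
                "sample": rows[:sample_size],
            }
        )
    return rows, previews


def sum_by_region(rows):
    """Aggregate operator: total amount per region."""
    totals = {}
    for r in rows:
        totals[r["region"]] = totals.get(r["region"], 0.0) + r["amount"]
    return [{"region": region, "total": total} for region, total in totals.items()]


# Hypothetical input table.
sales = [
    {"region": "EMEA", "amount": 120.0, "status": "paid"},
    {"region": "EMEA", "amount": 80.0, "status": "cancelled"},
    {"region": "APAC", "amount": 200.0, "status": "paid"},
]

operators = [
    ("filter: status == paid", lambda rs: [r for r in rs if r["status"] == "paid"]),
    ("select: region, amount", lambda rs: [{"region": r["region"], "amount": r["amount"]} for r in rs]),
    ("aggregate: sum(amount) by region", sum_by_region),
]

result, previews = run_with_previews(sales, operators)
for p in previews:
    print(f'{p["step"]}: {p["rows_in"]} -> {p["rows_out"]} rows, sample={p["sample"]}')
```

Recording `rows_in` and `rows_out` for each step is what makes a filter that drops rows, or a join that multiplies them, visible at the step where it happens rather than only in the final output.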

Lakeflow Designer is a key enabler for scaling data engineering beyond the core technical team on Databricks. By providing a visual interface integrated with natural language capabilities, it helps reduce the “SQL bottleneck,” allowing business teams to prototype and iterate on pipelines with greater autonomy. This goes beyond ease of use - it’s about organizational alignment. When transformations are visual and accessible, the gap between business intent and technical execution narrows, accelerating the journey from raw data to actionable insights. — Matheus Polycaropo, Data Engineering Leader, Serasa Experian

**3. Every visual transformation generates real, production-ready code**

Every transformation in Lakeflow Designer generates production-ready Python code under the hood. That code can be reviewed, versioned in Git, and integrated directly into larger production workflows. Over time, Designer will also support more native production outputs, such as materialized views. This ultimately reduces one of the biggest costs of self-service tools: handing off work to engineering to rebuild for production. Instead of redoing the work in another system, central data teams can build on what users have already created.

**4. No per-user licenses**

One of the biggest adoption barriers we’ve seen in traditional low-code tools is pricing. Seat-based licensing forces teams to decide upfront which users are worth giving access to, slowing adoption and limiting self-service before it even starts.

With Lakeflow Designer, there is no per-user license model. You only pay for the compute you use. Everyone across the business can participate in data work without creating a new procurement bottleneck.

How teams are using Lakeflow Designer

We’re already seeing hundreds of teams across industries use Lakeflow Designer to prepare and work with data in ways that were previously difficult to scale without engineering support.

For example:

- **Consulting and professional services teams** use Lakeflow Designer to clean client data from spreadsheets, PDFs, and shared files, then apply repeatable audit or analytics workflows to produce reports.

- **Financial services organizations** use Lakeflow Designer for self-service data preparation, regulatory reporting, and risk analysis.

- **Business teams across marketing, operations, and logistics** use it to combine data from multiple sources, answer operational questions, and prepare data for dashboards.

We’re also seeing Lakeflow Designer play an important role across the broader Databricks platform. Teams are using it to prepare data that flows into Metric Views and AI/BI dashboards, creating a complete self-service loop. Analysts can go from raw tables to polished dashboards without writing code.

With the adoption of Lakeflow Designer, we simplified the construction of data pipelines and elevated the quality of analyses through low-code development and AI capabilities powered by natural language. Non-technical teams began creating complex analytical processes autonomously-generating real business value and accelerating decision-making. More than that, the platform enabled us to scale a data-driven culture across the company, expanding the reach of advanced analytics to more areas and democratizing access to data intelligence throughout the organization. — Carlos Gumz, Data Lead, Hering

Getting started

Designer is currently available in all workspaces. To get started, click the **+ New** button in the top left of the workspace and select **Visual data prep**. If you do not see the **Visual data prep** option, Designer may need to be enabled by an admin in the preview portal.

Here are some other next steps you can take with Lakeflow Designer:

- Watch an 8-minute demo video of Lakeflow Designer

- Check out the documentation page for more detailed resources on getting started with Lakeflow Designer.

- Customer feedback continues to shape the product and play a key role in our roadmap. If you have any feedback or questions, we would love to hear from you at [designer-feedback@databricks.com](mailto:designer-feedback@databricks.com).
